AI is changing how healthcare enterprises make real clinical decisions as it becomes more deeply involved in the process. Diagnostic tools that flag cancer, predictive models that triage emergencies, and automated workflows for drug approvals all show how AI’s role in healthcare has expanded faster than the governance structures meant to monitor it.

However, many of these enterprises cannot say whether their AI is actually governed. This gap creates liability, safety risks for patients, and scrutiny from boards that many health systems are not ready to handle. Regulatory audits do not wait for organizations to catch up. When a model fails and no one can explain why, the lack of governance quickly becomes costly.

Intellivon brings enterprise-grade expertise in healthcare AI infrastructure, compliance architecture, and responsible AI deployment, helping health systems build the oversight layer that matches the scale of AI already running inside their organization. In this blog, we will explore how we prepare your AI governance platform for the future as both technology and compliance standards change.

Why Healthcare Enterprises Need AI Governance Platforms Now

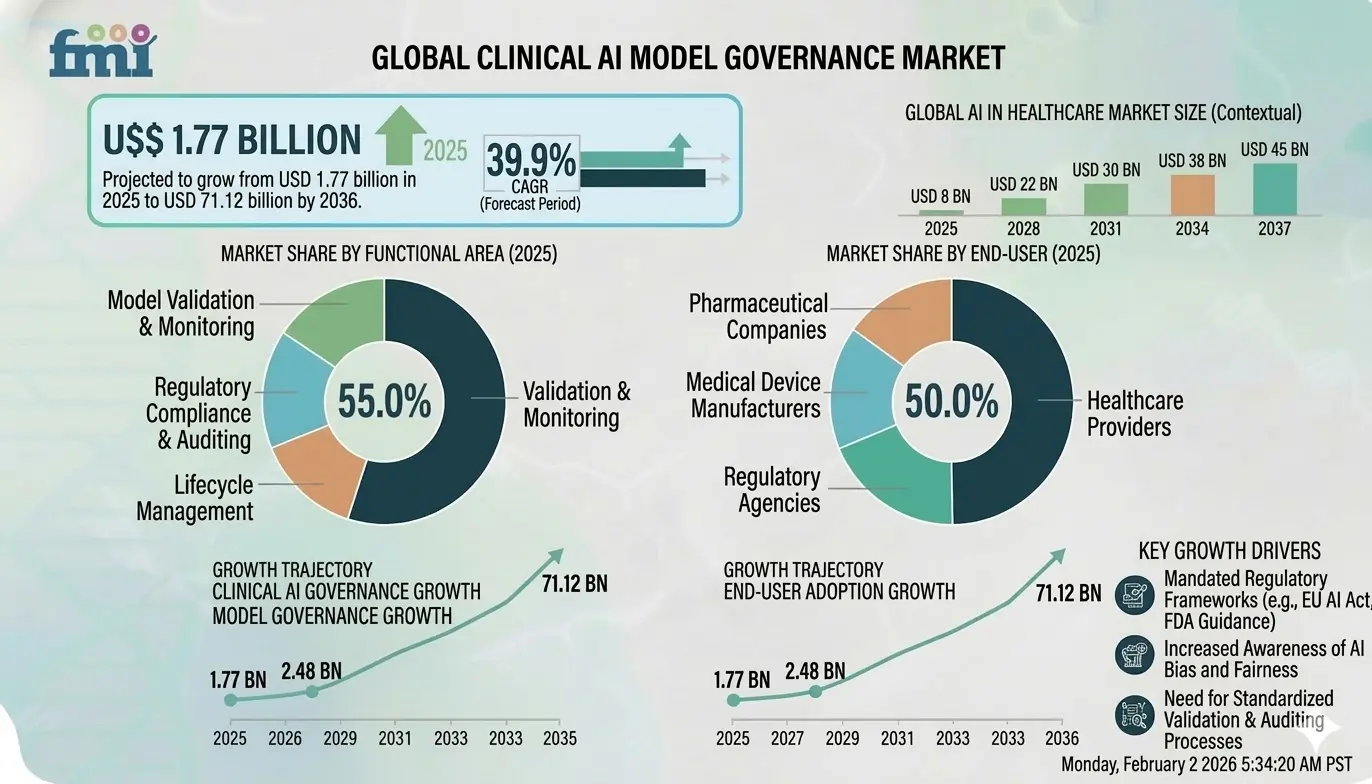

Healthcare enterprises are rapidly adopting AI. However, strict regulations, privacy risks, and bias concerns are increasing the need for strong governance. As a result, hospitals need platforms that monitor AI models and ensure safe, compliant deployment. The clinical AI governance market reflects this urgency, driven by regulation and enterprise-scale AI adoption.

In fact, the AI governance market is expected to grow from USD 1.77 billion in 2025 to USD 2.48 billion in 2026, and could reach USD 71.12 billion by 2036, reflecting a 39.9% CAGR during the forecast period.

The rapid shift toward clinical automation has outpaced traditional oversight, creating a critical need for structured management. Decision-makers must now implement governance to scale these innovations without compromising patient safety or ROI.

1. Accelerating AI Adoption

Healthcare organizations are moving beyond small pilots toward deep operational integration. Systems now manage everything from ER triaging to automated billing.

However, this shift creates a fragmented ecosystem of “black box” solutions. Therefore, a unified governance strategy is essential to maintain operational coherence and long-term financial stability.

2. AI Introduces New Regulatory Risks

New standards focus heavily on algorithmic transparency and mitigating data bias. A lack of documented oversight leads to significant legal exposure. Furthermore, models can “drift” into non-compliance as patient data shifts.

Adopting a robust governance layer is the most effective way to insulate the enterprise from these emerging liabilities.

3. Traditional Compliance Tools Fail for AI Systems

Static checklists work for traditional software but fail for probabilistic AI. Manual reporting is simply too slow for real-time processing speeds.

Consequently, relying on outdated methods creates a “governance gap” where risky models operate without supervision. Transitioning to automated platforms is the only way to bridge this technical divide.

4. Governance Platforms Reduce Clinical AI Risk

A dedicated platform acts as a continuous safety net, providing dashboards for model health and fairness metrics. It facilitates seamless collaboration between clinical experts and technical teams through shared risk scores.

Ultimately, this reduces “time-to-value” for AI investments while significantly lowering the cost of failure.

Implementing these platforms transforms AI from a risky experiment into a controlled, scalable enterprise asset. This strategic foundation is the only way to ensure your technological investments deliver sustainable growth and clinical excellence.

What Is a Healthcare AI Governance Platform?

A healthcare AI governance platform is a centralized management layer designed to oversee the entire lifecycle of medical algorithms. It provides automated tools for monitoring model accuracy, protecting data privacy, and detecting algorithmic bias in real time.

By integrating compliance workflows with technical performance tracking, the platform allows leaders to manage clinical risks while maintaining transparency across complex, high-stakes enterprise environments.

AI Governance vs Traditional Healthcare Compliance

Healthcare organizations have long relied on compliance frameworks to protect patient data and meet regulatory requirements. However, the rise of clinical AI systems introduces a new layer of operational risk. Traditional compliance programs were not designed to oversee machine learning models that continuously evolve after deployment.

AI governance platforms address this gap. Instead of focusing only on data privacy and reporting, they provide continuous oversight across the entire AI lifecycle. As a result, hospitals can monitor bias, detect model drift, and maintain transparency in automated clinical decisions.

In practice, both approaches are necessary. Traditional compliance ensures regulatory adherence, while AI governance ensures that clinical AI systems remain safe, ethical, and accountable in real-world environments.

Key Differences Between the Two Approaches

| Category | AI Governance Platforms | Traditional Healthcare Compliance |
| --- | --- | --- |
| Primary Focus | Oversight of AI models, algorithms, and automated decisions | Protection of patient data and regulatory reporting |
| Risk Monitoring | Continuous monitoring of bias, drift, and performance | Periodic audits and policy reviews |
| Decision Transparency | Provides explainability for AI-driven clinical outcomes | Limited transparency for automated systems |
| Scope of Oversight | Covers the full AI lifecycle from development to deployment | Focuses mainly on data handling and documentation |
| Compliance Automation | Generates real-time audit trails and regulatory reports | Relies heavily on manual documentation |
| Operational Integration | Integrated with AI pipelines and clinical decision tools | Operates as a separate governance function |
| Adaptability | Adjusts governance rules as models evolve | Compliance rules remain relatively static |

Traditional healthcare compliance remains essential for protecting patient data and meeting regulatory obligations. However, it does not provide oversight for how AI systems behave in clinical environments.

AI governance platforms fill this gap by monitoring models continuously, ensuring transparency in clinical decisions, and automating regulatory reporting. Consequently, healthcare organizations can deploy AI confidently while maintaining regulatory compliance and patient safety.

For hospitals adopting clinical AI at scale, combining traditional compliance frameworks with dedicated AI governance infrastructure is quickly becoming a strategic necessity.

Core Capabilities of Healthcare AI Governance Platforms

Modern governance platforms move beyond basic monitoring to provide a robust, end-to-end framework for algorithmic accountability. These core features ensure your AI investments remain safe, transparent, and fully aligned with clinical objectives.

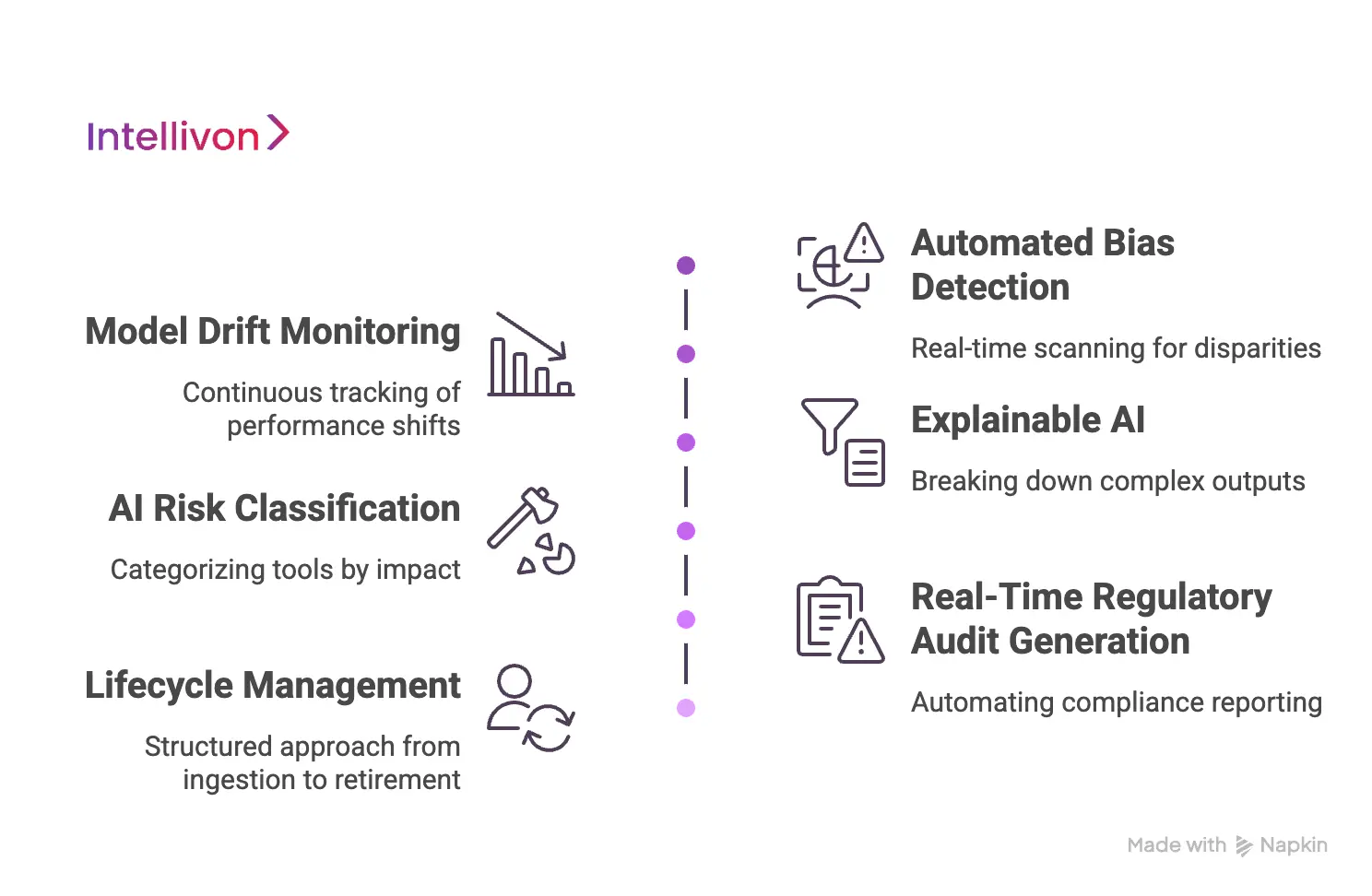

1. Automated Bias Detection

Algorithms can inadvertently inherit prejudices from historical patient data, leading to skewed treatment recommendations. Governance platforms run automated checks that scan for these disparities across demographic groups in real time.

By identifying bias during the validation phase, leaders can correct models before they impact patient care or institutional reputation.
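As a rough illustration of what one such automated check might look like, the sketch below computes a demographic parity gap: the largest difference in positive-prediction rate between groups. The group labels, the sample data, and the `MAX_GAP` threshold are hypothetical; a real platform would choose fairness metrics and thresholds with clinical and legal input.

```python
from collections import defaultdict

def positive_rate_by_group(records):
    """Share of positive predictions per demographic group.

    `records` is an iterable of (group, prediction) pairs, prediction in {0, 1}.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, prediction in records:
        totals[group] += 1
        positives[group] += prediction
    return {g: positives[g] / totals[g] for g in totals}

def demographic_parity_gap(records):
    """Largest difference in positive-prediction rate between any two groups."""
    rates = positive_rate_by_group(records)
    return max(rates.values()) - min(rates.values())

# Hypothetical validation-set predictions: (group label, flagged for follow-up?)
validation = [
    ("A", 1), ("A", 1), ("A", 0), ("A", 1),
    ("B", 1), ("B", 0), ("B", 0), ("B", 0),
]

MAX_GAP = 0.2  # assumed policy threshold, not a regulatory value
gap = demographic_parity_gap(validation)
needs_review = gap > MAX_GAP
print(f"parity gap = {gap:.2f}, needs review = {needs_review}")
```

A gap above the threshold would not prove the model is unfair, but it is a reasonable trigger for human review before release.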

2. Model Drift Monitoring

A model’s accuracy often degrades as the underlying clinical environment changes over time. Continuous monitoring tracks these performance shifts, alerting technical teams when a system no longer meets its original precision standards.

Therefore, your enterprise avoids relying on outdated logic that could compromise diagnostic accuracy.
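One common way to quantify this kind of shift is the Population Stability Index (PSI), which compares how a feature is distributed in live data against the training baseline. The sketch below is a minimal pure-Python version; the age values, the bin edges, and the 0.25 alert level (a widely used rule of thumb) are illustrative assumptions.

```python
import math

def population_stability_index(expected, actual, edges, eps=1e-6):
    """PSI between a baseline sample and a live sample over fixed bins.

    `edges` are ascending bin boundaries; a value lands in the bin whose
    index equals the number of edges it is greater than or equal to.
    Empty bins are floored at `eps` so the logarithm stays defined.
    """
    def bin_shares(values):
        counts = [0] * (len(edges) + 1)
        for v in values:
            counts[sum(v >= e for e in edges)] += 1
        return [max(c / len(values), eps) for c in counts]

    baseline_shares = bin_shares(expected)
    live_shares = bin_shares(actual)
    return sum(
        (live - base) * math.log(live / base)
        for base, live in zip(baseline_shares, live_shares)
    )

# Hypothetical patient ages at training time vs. in production
baseline_ages = [25, 35, 45, 55, 65, 75]
live_ages = [45, 62, 68, 71, 77, 80]
AGE_EDGES = [40, 60]    # bins: under 40, 40 to 59, 60 and over
PSI_ALERT = 0.25        # assumed alert level (common rule of thumb)

psi = population_stability_index(baseline_ages, live_ages, AGE_EDGES)
drift_alert = psi > PSI_ALERT
print(f"PSI = {psi:.2f}, alert = {drift_alert}")
```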

3. Explainable AI

Black-box algorithms are a significant liability in high-stakes medical settings where doctors must justify every decision. Explainable AI (XAI) features break down complex neural outputs into human-readable insights.

This clarity builds trust among clinicians and ensures that every AI-assisted diagnosis is backed by traceable logic.

4. AI Risk Classification and Model Tiering

Not every algorithm carries the same level of institutional risk. These platforms categorize AI tools, from administrative chatbots to diagnostic imaging, based on their potential impact on patient outcomes. This tiering allows executives to prioritize oversight resources where they are most needed for safety.
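A tiering rule can be as simple as a few yes/no questions about each tool. The sketch below shows one hypothetical three-tier scheme; the attribute names, the tiers, and the example tools are assumptions for illustration and do not reproduce any regulator's classification.

```python
from dataclasses import dataclass
from enum import Enum

class RiskTier(Enum):
    LOW = 1
    MODERATE = 2
    HIGH = 3

@dataclass(frozen=True)
class ModelProfile:
    name: str
    influences_diagnosis_or_treatment: bool
    touches_patient_data: bool

def classify(profile: ModelProfile) -> RiskTier:
    """Assign a tier from the most severe attribute that applies."""
    if profile.influences_diagnosis_or_treatment:
        return RiskTier.HIGH
    if profile.touches_patient_data:
        return RiskTier.MODERATE
    return RiskTier.LOW

# Hypothetical inventory entries
portfolio = [
    ModelProfile("diagnostic-imaging", True, True),
    ModelProfile("bed-scheduling", False, True),
    ModelProfile("visitor-faq-chatbot", False, False),
]

tiers = {p.name: classify(p) for p in portfolio}
for name, tier in tiers.items():
    print(f"{name}: {tier.name}")
```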

5. Real-Time Regulatory Audit Generation

Manual compliance reporting is a massive resource drain that often results in outdated documentation. Governance systems automate this process by generating detailed audit trails of model performance and human interventions.

Consequently, your organization stays “audit-ready” for unexpected inquiries from health authorities without manual labor.

6. Lifecycle Management for Healthcare AI Models

Effective governance covers the journey from initial data ingestion to final model retirement. This structured approach ensures that every version of an algorithm is logged, tested, and properly decommissioned when it becomes obsolete.

Maintaining this lifecycle history is critical for long-term accountability and strategic planning.

By integrating these features, organizations create a self-correcting ecosystem that grows stronger with every deployment. This technical maturity is the primary differentiator between experimental pilots and scalable, enterprise-grade AI operations.

Global Regulations Driving AI Governance in Healthcare

The global regulatory landscape for medical algorithms is shifting from flexible guidelines to strict, enforceable mandates. Organizations must proactively align their digital infrastructure with these international standards to avoid market exclusion and significant financial penalties.

1. HIPAA Compliance for AI Systems

Protecting patient privacy remains the bedrock of medical technology, but AI introduces new vulnerabilities in data de-identification. Governance platforms ensure that training sets and model outputs do not inadvertently leak protected health information (PHI).

Therefore, maintaining strict HIPAA compliance requires automated encryption and access controls that track every interaction with sensitive datasets.

2. EU AI Act Impact

The European Union’s AI Act classifies many healthcare AI systems as “high-risk,” including AI used in medical devices that require third-party conformity assessment, and demands rigorous pre-market assessment and human oversight. This regulation sets a global precedent for how algorithmic transparency and data quality must be documented.

Consequently, enterprises operating internationally must adopt standardized governance to meet these stringent documentation and safety requirements.

3. FDA Guidance

The FDA is increasingly focused on the “Total Product Lifecycle” approach for software as a medical device (SaMD). This guidance emphasizes the need for continuous monitoring of algorithms after they are deployed in clinical settings.

A dedicated platform simplifies this by capturing the performance data necessary to satisfy federal reviewers during periodic audits.

4. Data Governance Requirements

Effective AI is impossible without high-quality, ethically sourced data that meets regional residency requirements. Governance frameworks enforce “data lineage,” which tracks exactly where information comes from and how it is processed.

This transparency is vital for satisfying both legal investigators and internal quality assurance teams during large-scale deployments.

Navigating these complex legal waters demands a technical solution that encodes compliance into the software itself. This proactive stance ensures your enterprise remains agile while fulfilling its ethical and legal obligations.

AI Governance Frameworks Used in Healthcare AI Systems

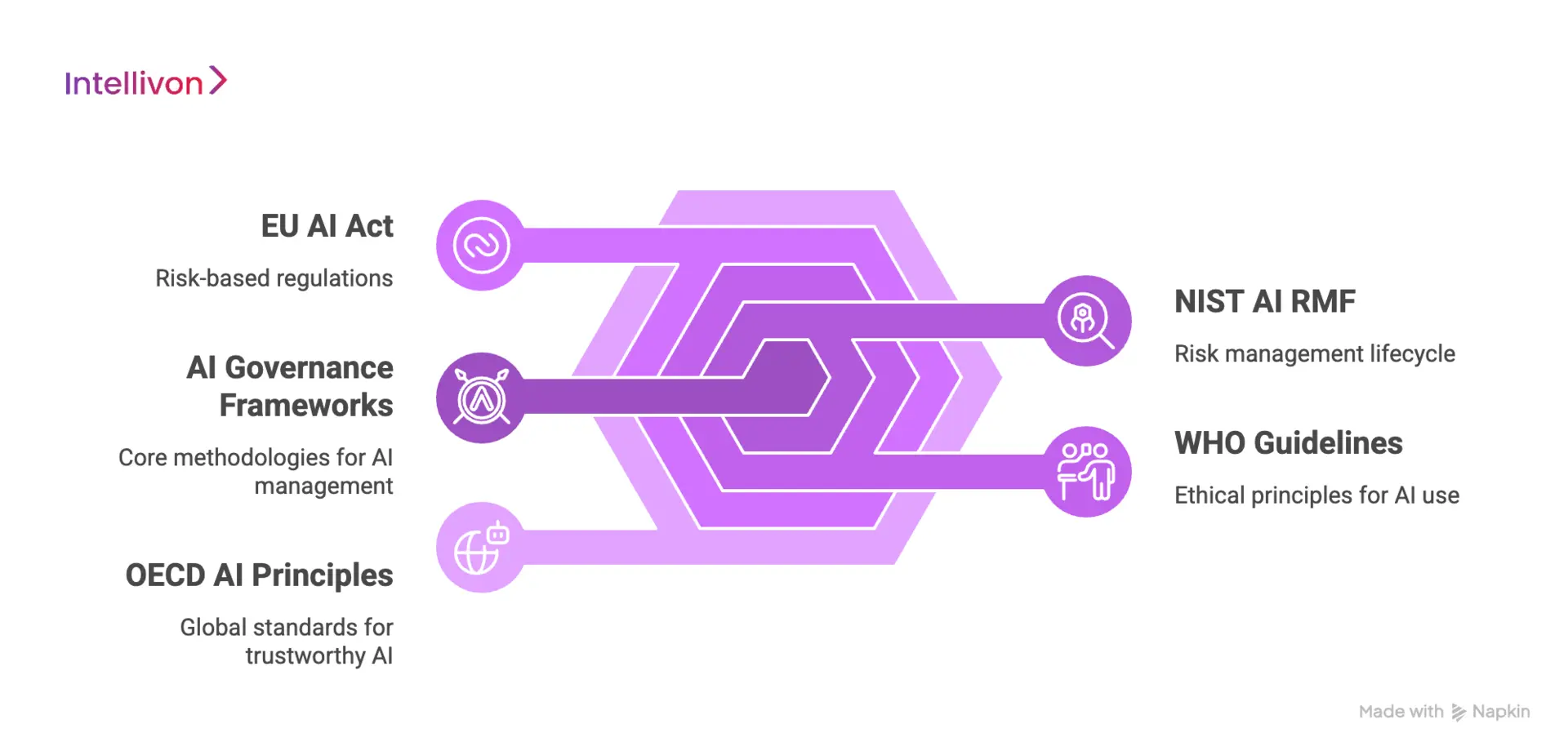

Adopting recognized global frameworks is no longer optional for enterprises seeking institutional trust and regulatory approval.

These structured methodologies provide the “blueprint” for managing high-stakes algorithmic risks in complex medical environments.

1. NIST AI Risk Management Framework

The NIST AI RMF offers a voluntary but highly influential methodology focused on four core functions:

- Govern

- Map

- Measure

- Manage

In a clinical context, the Map function involves documenting how a diagnostic tool affects specific patient demographics to identify latent risks. By following this lifecycle approach, hospitals can move from ad-hoc testing to a repeatable, evidence-based culture of algorithmic safety.

2. WHO Guidelines

The World Health Organization emphasizes six consensus principles, prioritizing human autonomy and equitable access to technology. These guidelines demand that AI systems supplement rather than replace clinical judgment, ensuring that “human-in-the-loop” oversight remains a core requirement.

Consequently, enterprises adhering to WHO standards demonstrate a commitment to global health ethics that resonates with international stakeholders.

3. EU AI Act Risk Tiers

The EU AI Act classifies most medical diagnostic and surgical AI as “High-Risk,” triggering strict mandatory obligations for data quality and human oversight.

Systems that utilize subliminal manipulation or social scoring are categorized as “Unacceptable Risk” and are prohibited entirely. Therefore, understanding these tiers is critical for any enterprise looking to deploy solutions within the European single market.

4. OECD AI Principles

The OECD principles serve as a global benchmark for trustworthy AI, focusing on transparency, robustness, and accountability. These standards encourage organizations to disclose how their models function and what data sources were used to train them.

Adopting these principles signals to investors that your platform is built on a foundation of long-term sustainability and international cooperation.

How Governance Platforms Implement These Frameworks

Enterprise-grade platforms translate these high-level conceptual frameworks into actionable technical workflows. They automate the data collection required for the NIST “Measure” function and generate the transparency reports mandated by the EU AI Act.

Ultimately, this automation bridges the gap between abstract ethical principles and the daily operational realities of a healthcare IT environment.

Aligning with these frameworks transforms regulatory compliance from a burden into a strategic asset. This rigorous approach ensures your AI initiatives are not only legally sound but also ethically responsible and commercially viable.

Key Features to Build Into an AI Governance Platform

Building a resilient governance layer requires moving beyond simple monitoring toward a comprehensive command center for algorithmic assets. These technical features ensure that every model in your ecosystem remains visible, compliant, and performing at peak efficiency.

1. Enterprise AI Asset Inventory and Tracking

Most large healthcare organizations struggle with “shadow AI,” where departments deploy localized tools without central oversight. A robust platform maintains a real-time inventory of every model, its training data sources, and its current deployment status.

Therefore, leaders gain a single source of truth that simplifies asset management and resource allocation across the entire enterprise.

2. Automated Model Validation Workflows

Manual validation is a bottleneck that delays the rollout of critical clinical tools. Automated workflows standardize the testing process, ensuring every algorithm meets predefined accuracy and safety benchmarks before it enters production.

This consistency reduces human error and helps ensure that only verified models that meet the agreed benchmarks interact with patient data.
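To make the idea of a pre-production gate concrete, here is a minimal sketch that compares a candidate model's reported metrics against predefined minimums. The metric names and threshold values are hypothetical; in practice they would come from the organization's own validation policy.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Benchmark:
    metric: str
    minimum: float

def run_validation_gate(metrics, benchmarks):
    """Return (passed, failures). A metric that was not reported counts as a failure."""
    failures = []
    for benchmark in benchmarks:
        value = metrics.get(benchmark.metric)
        if value is None:
            failures.append(f"{benchmark.metric}: not reported")
        elif value < benchmark.minimum:
            failures.append(
                f"{benchmark.metric}: {value:.2f} below minimum {benchmark.minimum:.2f}"
            )
    return (not failures, failures)

# Assumed benchmarks for a hypothetical screening model
BENCHMARKS = [Benchmark("sensitivity", 0.90), Benchmark("specificity", 0.85)]
candidate_metrics = {"sensitivity": 0.93, "specificity": 0.81}

passed, failures = run_validation_gate(candidate_metrics, BENCHMARKS)
print("PASS" if passed else "BLOCKED", failures)
```

Treating a missing metric as a failure keeps the gate closed by default, which is usually the safer choice for clinical software.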

3. Continuous Monitoring for Bias and Drift

Healthcare environments are dynamic, and a model that works in a suburban hospital may fail in an urban clinic due to demographic shifts. Continuous monitoring tools scan live data streams to detect performance “drift” or emerging biases immediately.

Consequently, your technical teams can retrain or pause underperforming models before they lead to clinical errors.
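A simple way to picture continuous monitoring is a rolling window over recent predictions whose outcomes are already known. The sketch below assumes ground-truth labels eventually arrive for each prediction; the baseline, tolerance, and window size are illustrative values only.

```python
from collections import deque

class RollingAccuracyMonitor:
    """Tracks accuracy over the most recent `window` labeled predictions."""

    def __init__(self, baseline, tolerance, window):
        self.baseline = baseline
        self.tolerance = tolerance
        self.outcomes = deque(maxlen=window)  # oldest results drop off automatically

    def record(self, prediction, actual):
        self.outcomes.append(prediction == actual)

    def status(self):
        if len(self.outcomes) < self.outcomes.maxlen:
            return "warming_up"  # not enough data to judge yet
        accuracy = sum(self.outcomes) / len(self.outcomes)
        if accuracy >= self.baseline - self.tolerance:
            return "ok"
        return "pause_recommended"

# Hypothetical settings: validated at 90% accuracy, alert below 85%
monitor = RollingAccuracyMonitor(baseline=0.90, tolerance=0.05, window=10)

for _ in range(10):
    monitor.record(prediction=1, actual=1)   # ten correct predictions
status_before = monitor.status()

for _ in range(3):
    monitor.record(prediction=1, actual=0)   # three recent misses
status_after = monitor.status()

print(status_before, "->", status_after)
```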

4. Explainability Dashboards for Clinicians

For AI to be adopted, doctors must understand why a specific recommendation was made. Explainability dashboards translate complex mathematical weights into intuitive visualizations that highlight which clinical factors influenced the AI’s output.

This transparency builds the necessary trust for clinicians to integrate machine learning into their daily diagnostic workflows.

5. Compliance Reports for Regulators and Auditors

Preparing for a regulatory audit shouldn’t take weeks of manual data gathering. An effective platform generates “audit-ready” reports at the click of a button, documenting every model change and human approval.

This proactive documentation reduces the administrative burden on legal teams and ensures the organization can survive surprise inspections with ease.

6. Role-Based Governance Controls and Approvals

Governance is a team sport that involves IT, legal, and medical staff. Role-based access controls ensure that only authorized personnel can move a model from “testing” to “live” status.

By enforcing these digital signatures and approval gates, the enterprise maintains strict accountability and prevents unauthorized changes to sensitive clinical software.
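An approval gate of this kind can be sketched as a check that every required role has signed off before a model's status changes. The role names, user names, and model ID below are hypothetical, and a production system would verify identities and signatures rather than trust a plain list.

```python
# Assumed set of roles whose sign-off is required before go-live
REQUIRED_APPROVER_ROLES = {"clinical_lead", "compliance_officer", "ml_engineer"}

class PromotionError(Exception):
    """Raised when a model lacks the sign-offs needed to go live."""

def promote_to_live(model_id, approvals):
    """`approvals` is a list of (user, role) sign-offs collected for this model."""
    roles_signed = {role for _, role in approvals}
    missing = REQUIRED_APPROVER_ROLES - roles_signed
    if missing:
        raise PromotionError(
            f"{model_id} blocked; missing sign-off from: {', '.join(sorted(missing))}"
        )
    return {
        "model_id": model_id,
        "status": "live",
        "approved_by": sorted(user for user, _ in approvals),
    }

partial = [("dr.rivera", "clinical_lead"), ("s.chen", "ml_engineer")]
complete = partial + [("a.okafor", "compliance_officer")]

try:
    promote_to_live("sepsis-risk-v2", partial)
except PromotionError as err:
    print(err)

result = promote_to_live("sepsis-risk-v2", complete)
print(result["status"], result["approved_by"])
```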

Integrating these features transforms your AI strategy from a collection of isolated tools into a synchronized, high-performance engine. This level of technical maturity is what allows an organization to lead the market rather than just react to it.

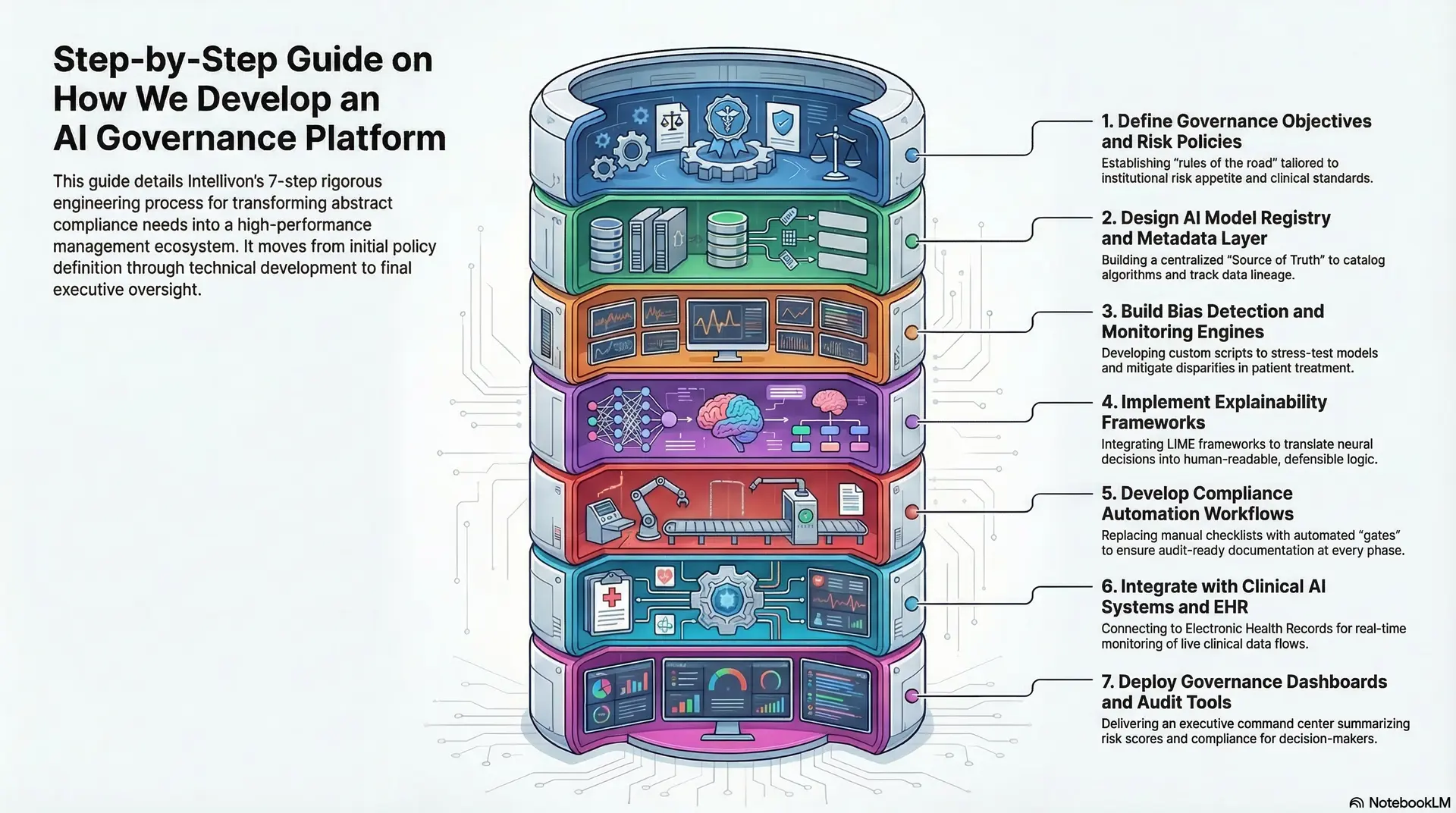

Step-by-Step Guide on How We Develop an AI Governance Platform

Building a resilient governance infrastructure requires a methodical approach that balances technical precision with high-level business logic. At Intellivon, we follow a rigorous engineering roadmap to transform abstract compliance needs into a high-performance management ecosystem.

1. Define Governance Objectives and Risk Policies

We begin by establishing the “rules of the road” tailored to your institutional risk appetite. This involves identifying specific clinical safety standards, legal mandates, and ethical boundaries your AI must never cross.

Therefore, we ensure the platform is not just a technical tool, but a direct reflection of your corporate strategy and values.

2. Design AI Model Registry and Metadata Layer

Our engineers build a centralized “Source of Truth” that catalogs every algorithm across your enterprise. This metadata layer tracks model versions, training data lineage, and developer credentials in real time.

By creating this visibility, we eliminate the risks associated with “shadow AI” and ensure every asset is fully accounted for.
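As a simplified picture of such a registry, the sketch below stores one record per model version and reports any model seen running on the network that has no registry entry. The field names, model IDs, and dataset names are hypothetical, and a real registry would sit on a database rather than an in-memory dictionary.

```python
from dataclasses import dataclass
from datetime import date

@dataclass(frozen=True)
class ModelRecord:
    model_id: str
    version: str
    owner: str
    training_datasets: tuple
    status: str            # "testing", "live", or "retired"
    registered_on: date

class ModelRegistry:
    def __init__(self):
        self._records = {}

    def register(self, record):
        key = (record.model_id, record.version)
        if key in self._records:
            raise ValueError(f"{key} is already registered")
        self._records[key] = record

    def versions(self, model_id):
        # Plain string sort; adequate for this example's version labels
        return sorted(v for (m, v) in self._records if m == model_id)

    def unregistered(self, discovered_model_ids):
        """Model IDs found in the environment but absent from the registry."""
        known = {m for (m, _) in self._records}
        return sorted(set(discovered_model_ids) - known)

registry = ModelRegistry()
registry.register(ModelRecord("sepsis-risk", "1.0", "icu-analytics",
                              ("ehr_2021",), "retired", date(2022, 3, 1)))
registry.register(ModelRecord("sepsis-risk", "1.1", "icu-analytics",
                              ("ehr_2021", "ehr_2023"), "live", date(2024, 1, 15)))

shadow = registry.unregistered(["sepsis-risk", "radiology-triage"])
print(registry.versions("sepsis-risk"), shadow)
```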

3. Build Bias Detection and Monitoring Engines

We develop custom scripts that continuously stress-test your models against diverse demographic datasets. These engines detect “protected attribute” disparities that could lead to unfair patient treatment or legal liability.

Consequently, your organization can proactively mitigate bias before it manifests in a clinical setting or results in a regulatory fine.
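One disparity such an engine might look for is a difference in recall across groups, sometimes called an equal opportunity gap: among patients who truly have a condition, does the model catch them at similar rates regardless of group? The sketch below is a minimal illustration with made-up group labels and outcomes.

```python
def recall_by_group(rows):
    """Recall (true positive rate) per group.

    `rows` holds (group, actual, predicted) with labels in {0, 1};
    only rows where actual == 1 contribute to recall.
    """
    true_positives, positives = {}, {}
    for group, actual, predicted in rows:
        if actual == 1:
            positives[group] = positives.get(group, 0) + 1
            true_positives[group] = true_positives.get(group, 0) + (1 if predicted == 1 else 0)
    return {g: true_positives[g] / positives[g] for g in positives}

def equal_opportunity_gap(rows):
    """Largest difference in recall between any two groups."""
    recalls = recall_by_group(rows)
    return max(recalls.values()) - min(recalls.values())

# Hypothetical stress-test results: (group, has condition, model flagged)
stress_test = [
    ("X", 1, 1), ("X", 1, 1), ("X", 1, 1), ("X", 1, 1), ("X", 0, 0),
    ("Y", 1, 1), ("Y", 1, 0), ("Y", 1, 1), ("Y", 1, 0), ("Y", 0, 0),
]

recalls = recall_by_group(stress_test)
recall_gap = equal_opportunity_gap(stress_test)
print(recalls, f"gap = {recall_gap:.2f}")
```

Here the model misses half of the true cases in group Y while catching all of them in group X, the kind of pattern that should be investigated before deployment.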

4. Implement Explainability Frameworks

Transparency is non-negotiable in medicine, so we integrate advanced “Local Interpretable Model-agnostic Explanations” (LIME) or similar frameworks. These tools break down complex neural decisions into human-readable insights for clinicians.

This step ensures that every AI recommendation is backed by a clear, defensible logic that healthcare professionals can trust.
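To show the underlying idea without depending on a specific library, the sketch below uses a simpler technique than LIME: occlusion-style attribution, which swaps one input at a time for a reference value and measures how much the prediction moves. The `toy_risk_model`, its coefficients, and the patient values are invented stand-ins for a deployed model.

```python
import math

def occlusion_attributions(predict, patient, baseline):
    """Change in predicted score when each feature is replaced by its baseline value.

    A positive number means the patient's actual value pushed the score up
    relative to the reference patient.
    """
    original = predict(patient)
    contributions = {}
    for feature in patient:
        perturbed = dict(patient)
        perturbed[feature] = baseline[feature]
        contributions[feature] = original - predict(perturbed)
    return contributions

def toy_risk_model(x):
    """Hypothetical stand-in for a deployed model (logistic score)."""
    z = 0.04 * (x["age"] - 50) + 0.8 * x["lactate"] - 2.0
    return 1 / (1 + math.exp(-z))

patient = {"age": 70, "lactate": 4.0}
reference = {"age": 50, "lactate": 1.0}   # assumed "typical" patient

contributions = occlusion_attributions(toy_risk_model, patient, reference)
for feature, delta in sorted(contributions.items(), key=lambda kv: -kv[1]):
    print(f"{feature}: {delta:+.3f}")
```

A dashboard could render these per-feature deltas as a ranked bar chart so a clinician sees at a glance which inputs drove the score.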

5. Develop Compliance Automation Workflows

We replace manual checklists with automated “gates” that a model must pass before deployment. These workflows generate standardized documentation for every phase of the machine learning lifecycle.

This automation ensures your enterprise remains “audit-ready” at all times, significantly reducing the administrative burden on your legal and IT departments.

6. Integrate with Clinical AI Systems and EHR

A governance platform is only effective if it talks to your existing infrastructure. We specialize in deep integration with Electronic Health Records (EHR) and existing clinical AI tools to monitor live data flows.

This connectivity allows for real-time intervention if a model starts to deviate from its safety benchmarks during actual patient care.

7. Deploy Governance Dashboards and Audit Tools

The final stage is the delivery of a high-level executive command center. We provide intuitive dashboards that summarize risk scores, model health, and compliance status for non-technical decision-makers.

These tools empower you to oversee a massive technological landscape with total clarity and confidence.

Executing this roadmap requires a partner who understands both the code and the clinical consequences. By following this disciplined process, we ensure your AI initiatives are built on a foundation of safety, transparency, and long-term scalability.

Common Challenges in AI Governance Platform Development

Deploying a governance layer within a sprawling healthcare enterprise often uncovers deep-seated technical and operational hurdles. Identifying these friction points early is essential for building a system that actually works in a high-stakes clinical environment.

1. Fragmented AI Models Across Hospital Systems

Large healthcare networks often suffer from “departmental silos” where different units deploy isolated AI tools without central coordination. This fragmentation makes it nearly impossible for leadership to assess total institutional risk or maintain consistent safety standards.

How we solve it: We implement a unified discovery layer that scans your network to inventory every active model. By centralizing these assets into a single management console, we provide total visibility and control over your entire algorithmic footprint.

2. Limited Explainability in Clinical AI Models

Many advanced neural networks operate as “black boxes,” providing accurate results without explaining the underlying clinical logic. This lack of transparency prevents doctors from fully trusting the technology and creates massive liability during medical reviews.

How we solve it: Our team integrates model-agnostic explainability frameworks that translate complex data weights into intuitive visualizations. This allows clinicians to see exactly which patient factors influenced a diagnosis, ensuring every decision is defensible and transparent.

3. Complex Regulatory Requirements Across Regions

Navigating the overlapping demands of HIPAA, the EU AI Act, and evolving FDA guidelines is a significant burden for internal legal teams. Keeping up with these changes manually often leads to compliance gaps and increased risk of heavy financial penalties.

How we solve it: We build “regulatory-aware” engines into the platform that automatically update compliance checklists as laws evolve. This ensures your documentation is always aligned with the latest global standards without requiring constant manual intervention.

4. Integrating Governance Into Existing AI Pipelines

Forcing data scientists to change their entire workflow to accommodate governance often leads to friction and slowed innovation. Traditional oversight methods frequently act as a “bottleneck” rather than an enabler for rapid deployment.

How we solve it: We design our governance tools to sit “on top” of your current DevOps pipelines through seamless API integrations. This allows your team to keep using their preferred tools while the platform captures the necessary oversight data in the background.
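As an illustration of how oversight data can be captured without changing how a data scientist calls a model, the sketch below wraps a prediction function in a decorator. `GOVERNANCE_LOG` is a stand-in for a call to a governance platform's API, and the model, its ID, and its formula are hypothetical.

```python
import functools
import time

GOVERNANCE_LOG = []  # stand-in for sending events to a governance API

def governed(model_id, version):
    """Decorator that records metadata for every prediction call."""
    def decorator(predict_fn):
        @functools.wraps(predict_fn)
        def wrapper(*args, **kwargs):
            started = time.time()
            result = predict_fn(*args, **kwargs)
            # Inputs are deliberately not logged here, since they may contain PHI
            GOVERNANCE_LOG.append({
                "model_id": model_id,
                "version": version,
                "timestamp": started,
                "latency_s": time.time() - started,
                "output": result,
            })
            return result
        return wrapper
    return decorator

@governed(model_id="readmission-risk", version="2.3")
def predict_readmission(length_of_stay_days, prior_admissions):
    """Hypothetical model; existing callers use it exactly as before."""
    return min(1.0, 0.05 * length_of_stay_days + 0.1 * prior_admissions)

score = predict_readmission(4, 2)
print(score, len(GOVERNANCE_LOG))
```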

Overcoming these obstacles requires a strategic balance between rigid oversight and operational flexibility. By addressing these challenges head-on, you turn governance from a restrictive hurdle into a robust foundation for scalable innovation.

How AI Governance Improves Patient Safety

The ultimate metric for any healthcare technology is its impact on patient outcomes. Effective governance ensures that as algorithms take on more clinical responsibility, they remain subservient to the core medical principle of “first, do no harm.”

1. Reducing Bias in Diagnostic Algorithms

Biased algorithms can lead to systemic healthcare disparities, such as underdiagnosing conditions in specific ethnic groups or overlooking symptoms in certain genders.

Governance platforms utilize automated parity testing to identify these inequities before they result in clinical errors. Therefore, your enterprise ensures that life-saving diagnostics are applied fairly and accurately across your entire patient population.

2. Preventing Unsafe Model Drift in Clinical AI

A model that achieves 99% accuracy in a lab may degrade quickly when exposed to the messy, real-world data of a busy hospital. Continuous monitoring acts as an early-warning system, alerting staff the moment a model’s precision begins to “drift” from its safety baseline.

Consequently, you prevent outdated or malfunctioning logic from influencing critical treatment plans.

3. Increasing Clinician Trust in AI Systems

Doctors are understandably hesitant to follow recommendations from a system they cannot understand. Governance platforms bridge this gap by providing clear transparency into how an AI reached its conclusion.

This open-book approach empowers clinicians to use AI as a reliable co-pilot, leading to more confident decision-making and better patient adherence to suggested treatments.

4. Ensuring Accountability in AI-Driven Care

When an automated system contributes to a clinical decision, there must be a clear trail of who authorized the model and what data it used. Governance frameworks provide a permanent, unalterable record of the AI’s lifecycle and every human intervention.

This accountability is vital for maintaining institutional integrity and ensuring that patient safety remains the primary focus of every technological advancement.
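One way to approximate a tamper-evident record is a hash chain, where each entry stores a hash of its own content plus the hash of the entry before it. The sketch below is a minimal in-memory version with hypothetical actors and actions; it makes alterations detectable rather than impossible, and a real system would add write-once storage and access controls.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64

def _digest(body):
    """SHA-256 over a canonical JSON encoding of the entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()

class AuditTrail:
    def __init__(self):
        self.entries = []

    def append(self, actor, action, model_id):
        previous_hash = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        body = {"actor": actor, "action": action,
                "model_id": model_id, "previous_hash": previous_hash}
        self.entries.append({**body, "hash": _digest(body)})

    def verify(self):
        """True only if every entry matches its hash and links to its predecessor."""
        previous_hash = GENESIS_HASH
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["previous_hash"] != previous_hash or entry["hash"] != _digest(body):
                return False
            previous_hash = entry["hash"]
        return True

trail = AuditTrail()
trail.append("dr.rivera", "approved_for_live", "sepsis-risk-v2")
trail.append("s.chen", "retrained", "sepsis-risk-v2")
intact = trail.verify()

trail.entries[0]["actor"] = "someone.else"   # simulate after-the-fact tampering
tampered_detected = not trail.verify()
print(intact, tampered_detected)
```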

By prioritizing these safety guardrails, healthcare leaders can innovate with a clear conscience. This commitment to safety builds the long-term institutional trust necessary for AI to truly transform modern medicine.

Cost to Build a Healthcare AI Governance Platform

At Intellivon, healthcare AI governance platforms are engineered as enterprise risk infrastructure, not as monitoring dashboards layered onto clinical AI tools. The objective is to create a centralized system that oversees the entire AI lifecycle across hospitals and healthtech organizations.

However, building an enterprise AI governance platform requires more than integrating model monitoring tools. The platform must track AI assets, monitor bias and drift, generate regulatory audits, and maintain explainability across clinical decision systems.

When implemented correctly, these platforms give healthcare organizations the ability to deploy AI safely while maintaining regulatory compliance and clinical accountability.

Estimated Phase-Wise Development Cost

| Phase | Description | Estimated Cost Range (USD) |
| --- | --- | --- |
| Discovery & Governance Framework Design | Define AI governance policies, risk tiers, compliance requirements, and oversight workflows across clinical AI systems | $6,000 – $12,000 |
| AI Monitoring & Compliance Infrastructure | Build model monitoring systems, bias detection pipelines, drift detection engines, and compliance logging architecture | $10,000 – $22,000 |
| Explainability & Transparency Systems | Implement model interpretability frameworks and clinician-facing explainability dashboards for clinical decision transparency | $8,000 – $18,000 |
| AI Model Registry & Lifecycle Management | Develop a centralized registry to track AI models, training data, versions, and deployment status across healthcare systems | $7,000 – $15,000 |
| Risk Tiering & Governance Controls | Implement risk classification frameworks, approval workflows, and governance rules for different AI risk categories | $6,000 – $14,000 |
| Healthcare System Integrations | Integrate with EHR systems, clinical AI pipelines, medical imaging platforms, and hospital data infrastructure | $10,000 – $25,000 |
| Security & Healthcare Data Protection | Implement encryption, access governance, secure logging, and healthcare-grade data protection systems | $6,000 – $12,000 |
| Testing & Regulatory Validation | Conduct governance testing, audit simulations, compliance validation, and platform performance optimization | $4,000 – $9,000 |
| Deployment & Infrastructure Setup | Configure cloud infrastructure, monitoring systems, and operational governance dashboards | $4,000 – $8,000 |

Total Initial Investment

$60,000 – $160,000

Ongoing maintenance and governance updates typically require 15–20% of the initial development cost per year.

These costs support continuous monitoring, compliance updates, and platform optimization as AI models evolve.
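For budgeting, the figures above can be worked through in a few lines. The sketch below adds up the itemized phase ranges and applies the 15–20% maintenance rate to the stated total. The itemized phases sum to $61,000 – $135,000, which is narrower than the stated total of $60,000 – $160,000, so the calculation treats the stated total as given rather than trying to reconcile the two.

```python
# Phase cost ranges in USD, taken from the table above
phases = {
    "Discovery & Governance Framework Design": (6_000, 12_000),
    "AI Monitoring & Compliance Infrastructure": (10_000, 22_000),
    "Explainability & Transparency Systems": (8_000, 18_000),
    "AI Model Registry & Lifecycle Management": (7_000, 15_000),
    "Risk Tiering & Governance Controls": (6_000, 14_000),
    "Healthcare System Integrations": (10_000, 25_000),
    "Security & Healthcare Data Protection": (6_000, 12_000),
    "Testing & Regulatory Validation": (4_000, 9_000),
    "Deployment & Infrastructure Setup": (4_000, 8_000),
}

phase_low = sum(low for low, _ in phases.values())
phase_high = sum(high for _, high in phases.values())

# Stated total initial investment, used as-is for the maintenance estimate
stated_low, stated_high = 60_000, 160_000

# 15% of the low end and 20% of the high end, in whole dollars
maintenance_low = stated_low * 15 // 100
maintenance_high = stated_high * 20 // 100

print(f"Itemized phases: ${phase_low:,} to ${phase_high:,}")
print(f"Annual maintenance: ${maintenance_low:,} to ${maintenance_high:,}")
```

On the stated total, annual maintenance therefore falls between $9,000 and $32,000.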

Timeline to Launch an AI Governance Platform

The timeline depends on the number of AI systems being governed and the complexity of healthcare integrations.

| Phase | Estimated Timeline |
| --- | --- |
| Governance strategy & compliance mapping | 2 – 3 weeks |
| Platform architecture & monitoring systems | 3 – 4 weeks |
| AI model integrations & governance workflows | 4 – 6 weeks |
| Compliance testing & validation | 3 – 4 weeks |
| Deployment & operational setup | 2 – 3 weeks |

Typical development timeline: 3 – 5 months for an enterprise healthcare AI governance platform.

Hidden Costs Healthcare Organizations Should Plan For

Even well-designed AI governance platforms encounter operational challenges if indirect costs are ignored.

- Regulatory updates require continuous adjustments as healthcare AI regulations evolve globally.

- Infrastructure scaling becomes necessary as hospitals deploy more AI models and data volumes increase.

- AI monitoring costs rise as bias detection and drift monitoring operate continuously.

- Integration complexity increases when connecting multiple EHR systems and clinical AI pipelines.

- Operational training becomes essential for compliance teams, data scientists, and clinical leadership.

Best Practices to Avoid Budget Overruns

Based on Intellivon’s experience building enterprise AI governance systems, several strategies help organizations control development costs.

- Define AI governance policies and risk frameworks before platform development begins.

- Inventory all AI models across departments early to avoid governance blind spots.

- Design modular monitoring systems that allow new AI models to be governed easily.

- Integrate explainability and compliance automation directly into the platform architecture.

- Ensure the platform supports regulatory reporting across multiple jurisdictions.

- Maintain observability across AI pipelines, governance workflows, and clinical systems.

Organizations planning to build a healthcare AI governance platform can work with Intellivon’s AI and healthcare infrastructure experts to design a roadmap aligned with clinical AI strategy, regulatory requirements, and long-term platform scalability.

Conclusion

Building a healthcare AI governance platform is a strategic necessity. By prioritizing transparency and safety, you transform high-risk innovations into reliable growth engines.

This proactive approach mitigates liability while fostering institutional trust and clinical excellence. Ready to secure your AI future? Partner with Intellivon, the leaders in providing cutting-edge, enterprise-grade AI solutions tailored for the most demanding healthcare environments.

Build a Healthcare AI Governance Platform With Intellivon

At Intellivon, healthcare AI governance platforms are engineered as enterprise oversight infrastructure, not as monitoring dashboards layered onto existing AI tools. The goal is to create a centralized governance system that supervises the entire lifecycle of clinical and operational AI across healthcare organizations.

Healthcare AI environments are complex. Governance platforms must oversee diagnostic models, predictive analytics systems, and operational AI tools while maintaining transparency, patient safety, and regulatory compliance. As a result, the platform must integrate with clinical systems, monitor AI behavior continuously, and generate compliance-ready audit trails for regulators.

Why Partner With Intellivon?

- Enterprise AI Governance Architecture: We design centralized governance platforms that track AI assets, manage model lifecycle oversight, and enforce governance policies across healthcare AI deployments.

- AI Monitoring and Risk Management Systems: Our platforms include automated bias detection, drift monitoring engines, and performance tracking systems to ensure clinical AI models remain safe and reliable.

- Explainable AI Infrastructure: We implement explainability frameworks that allow clinicians and regulators to understand how AI models generate predictions, recommendations, and clinical decisions.

- Regulatory Compliance and Audit Systems: Every governance platform includes automated compliance reporting, risk tiering frameworks, and regulatory audit trails aligned with HIPAA, the EU AI Act, and other healthcare regulations.

- Healthcare System Integrations: We integrate governance platforms with EHR systems, clinical AI pipelines, medical imaging platforms, and healthcare data infrastructure to maintain continuous oversight.

- Secure Healthcare Data Infrastructure: Our platforms implement encryption, access governance, and healthcare-grade security architecture to protect patient data and AI model operations.

- Scalable Cloud-Native Governance Platforms: Healthcare AI governance platforms are deployed on scalable cloud infrastructure designed to monitor multiple AI systems, manage governance workflows, and support enterprise healthcare environments.

Organizations planning to build a healthcare AI governance platform can partner with Intellivon’s healthcare AI experts to design and deploy governance infrastructure aligned with clinical safety requirements, regulatory compliance, and long-term AI strategy.

FAQs

Q1. What is an AI governance platform in healthcare?

A1. A healthcare AI governance platform is a centralized system that monitors, audits, and manages AI models used in clinical and operational environments. It helps hospitals detect bias, track model performance, and maintain regulatory compliance.

Q2. Why do hospitals need AI governance platforms?

A2. Hospitals use AI governance platforms to ensure clinical AI systems remain safe, transparent, and compliant. These platforms monitor bias, detect model drift, and generate audit reports for regulators.

Q3. How do AI governance platforms detect bias in healthcare AI?

A3. AI governance platforms continuously analyze model outputs and training data. They use statistical monitoring systems to identify unfair patterns or biased predictions that may affect clinical decisions.

Q4. What regulations affect healthcare AI systems?

A4. Healthcare AI systems must comply with regulations such as HIPAA, the EU AI Act, FDA guidance for medical software, and regional healthcare data protection laws.

Q5. How long does it take to build a healthcare AI governance platform?

A5. Building an enterprise healthcare AI governance platform typically takes 3–5 months, depending on the number of AI systems, integrations with hospital infrastructure, and regulatory requirements.