Key Takeaways:

- AI radiology software triggers FDA, EU MDR, HIPAA, GDPR, and cybersecurity requirements the moment it influences clinical decisions.

- Regulatory planning must start before development, as architecture decisions determine approval timelines and market entry.

- Explainability, bias testing, human review, and post-market drift monitoring are regulatory requirements, not optional features.

- Build costs range from $50,000 for a basic MVP to $280,000 and above for an enterprise platform with compliance controls.

- Intellivon builds regulatory-ready AI radiology software that your enterprise fully owns, with secure pipelines, audit workflows, and model monitoring from day one.

Radiology has always focused on precision. However, with the introduction of AI in the reading room, the discussion has shifted from clinical accuracy to legal responsibility. Hospitals and health tech companies are quickly working to integrate AI into diagnostic imaging workflows. Faster reads, fewer missed diagnoses, and less radiologist burnout make a compelling case. Yet, the regulatory process for deploying these tools commercially often causes many projects to stall.

The FDA, CE marking bodies, and international health authorities are not blocking innovation. They do require that each AI radiology product provide proof of its effectiveness through a structured and often lengthy approval process. Understanding these requirements is essential for creating a defensible, scalable, and investable product.

At Intellivon, we create compliant cloud architectures specifically designed for imaging AI. We have witnessed how regulatory readiness can distinguish between products that thrive and those that falter. This blog outlines what you need to know before you build, invest, or scale.

Why Enterprises Cannot Ignore AI Radiology Regulations

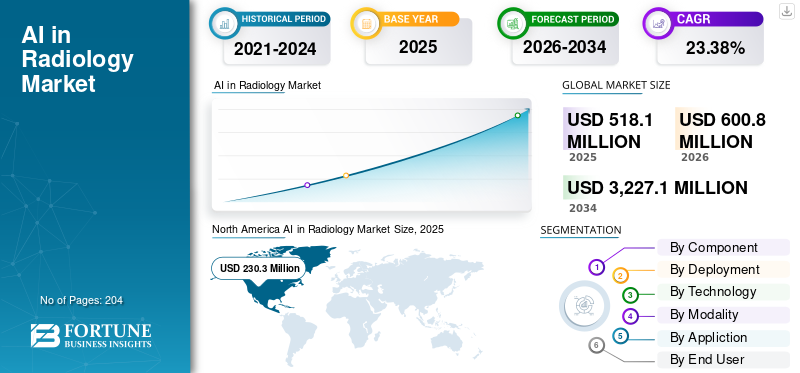

The radiology AI market is growing fast. Forecasts project a CAGR of 24.5% to 24.61% through 2030–2035, depending on the source. That kind of commercial momentum is hard to ignore.

However, growth at this pace brings regulatory scrutiny at the same pace. Compliance is no longer a side concern for enterprises entering this space. It has become a core factor in buying decisions, deployment approvals, and risk management frameworks.

Health systems evaluate AI radiology platforms differently than they did five years ago. Vendors who cannot demonstrate regulatory readiness now struggle to move past the pilot stage, regardless of how strong their clinical results are.

Investments in healthcare technology carry high stakes. For leaders building platforms in this space, regulatory alignment is not just a legal hurdle but a core business strategy that determines your market entry and long-term viability.

1. Regulatory Delays Can Stop Product Launches

AI radiology software often functions as a medical device because it directly influences clinical decisions. If your product team fails to define the intended use and risk controls early, you risk severe delays to approval and launch.

- Unclear Intended Use: Vague purposes prevent proper risk categorization.

- Missing Validation Evidence: Lack of testing data leads to immediate rejection.

- Weak Documentation: Incomplete build records stall the audit process.

- Unsuitable Clinical Claims: Unsupported promises trigger heavy scrutiny.

- Delayed FDA or CE Review: Mistakes add months to approval timelines.

- Product Rework: Post-development fixes are incredibly expensive.

2. Hospitals May Reject Software That Fails Compliance Review

Enterprise buyers are risk-averse. Even with high accuracy, hospital leadership will reject a purchase if the tool fails internal due diligence from legal, IT, or security teams.

- Vendor Due Diligence: Buyers investigate your company’s stability and quality standards.

- HIPAA Readiness: Every part of the system must protect patient privacy.

- Data Processing Agreements: Legal teams require clear contracts on data handling.

- Cybersecurity Review: Software must not create backdoors for hackers.

- Clinical Evidence Review: Doctors want peer-reviewed proof of real-world success.

- PACS/RIS Compatibility: The tool must fit existing hospital workflows.

3. Poor Governance Can Create Patient Safety Risks

Faulty software directly impacts patient care. If a model performs poorly on specific groups or misses findings, the liability falls on the enterprise.

- Missed Findings: Overlooking critical pathologies like tumors or fractures.

- False Positives/Negatives: Incorrectly flagging healthy patients or clearing patients who have disease.

- Biased Performance: Lower accuracy for certain demographics or ages.

- Model Drift: Performance decay after deployment due to changing data.

4. Weak Security Can Expose Sensitive Imaging Data

Radiology systems handle highly sensitive DICOM metadata and patient records. Insecure controls damage trust and invite massive fines.

- PHI Exposure: Accidental leaks of protected health information.

- Insecure APIs: Vulnerable connections that allow unauthorized data access.

- Cloud Misconfiguration: Improperly secured servers exposing vast image libraries.

5. Lack Of Monitoring Can Turn A Cleared Product Into A Risk

Regulation continues after launch. Changes in hardware or patient populations can degrade your AI performance over time.

- Scanner Changes: New hardware types produce different image quality.

- Protocol Changes: Updates in how technicians capture scans.

- Missing Feedback Loops: Failing to track how clinicians use the output.

This is why regulatory readiness must start before development. To understand what enterprises need to build, we first need to define the major regulatory frameworks that affect AI radiology software.

What Regulations Apply To AI Radiology Software?

Navigating the regulatory universe requires a clear understanding of which laws govern specific healthcare technologies.

Identifying the overlap between medical device safety, data privacy, and emerging AI governance is the first step toward a viable market strategy.

1. FDA Medical Device Rules

The FDA oversees software that performs a medical function. Platforms that help detect fractures, prioritize stroke cases, or measure tumors typically qualify as medical devices.

The agency categorizes these tools based on risk, with most radiology AI falling into Class II. This typically requires a 510(k) clearance, which shows the software is substantially equivalent in safety and effectiveness to a device already on the market.

2. HIPAA Rules

Privacy remains a non-negotiable requirement for healthcare ventures. HIPAA regulations apply when software handles protected health information.

This includes pixel data in a scan and DICOM metadata containing names or birthdates. Implementing technical safeguards like encryption and access controls prevents unauthorized data exposure.

3. EU MDR Requirements

In Europe, the Medical Device Regulation (MDR) sets the bar for clinical safety. Any AI tool with a medical purpose undergoes a rigorous conformity assessment.

This process ensures the software delivers consistent results across different hospital settings. Achieving a CE mark under MDR is essential for scaling across the European market.

4. EU AI Act Requirements

The EU AI Act adds a specific layer of governance for artificial intelligence. Because radiology software influences health outcomes, it is often classified as a high-risk system. This classification requires high standards for data quality and human oversight.

Systems must remain transparent so a professional can understand and override the AI output.

5. GDPR Requirements

While HIPAA governs the US, GDPR handles data protection in Europe. It dictates how enterprises collect, anonymize, and transfer medical images. For developers, the right to an explanation is a key hurdle.

Systems must be transparent enough that providers can understand how a specific diagnostic conclusion was reached.

6. Cybersecurity Requirements

Modern radiology AI connects to PACS, EHR, and cloud environments. Because these systems are interconnected, they are frequent targets for cyberattacks.

Regulatory bodies require evidence that APIs and network connections are hardened against breaches to protect both patient data and hospital operations.

7. Post-Market Monitoring Requirements

The regulatory journey continues long after launch. Clinical evidence must prove the AI works safely in the real world before market entry. Once deployed, active monitoring identifies performance decay.

Regular audits ensure the software remains accurate as imaging protocols and patient demographics evolve over time.
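One way to make drift monitoring concrete is to compare the distribution of model confidence scores recorded during validation with a recent production window. The sketch below uses the Population Stability Index (PSI), a common drift statistic. The function name, the ten-bin layout, and the sample data are illustrative assumptions, not a method prescribed by any regulator.

```python
import math


def population_stability_index(baseline, recent, bins=10):
    """PSI between two samples of model scores, each score in [0, 1]."""
    if not baseline or not recent:
        raise ValueError("Both samples must contain at least one score")

    def proportions(scores):
        counts = [0] * bins
        for score in scores:
            index = min(int(score * bins), bins - 1)  # put 1.0 in the last bin
            counts[index] += 1
        # A small floor avoids log(0) when a bin is empty.
        return [max(count / len(scores), 1e-6) for count in counts]

    p = proportions(baseline)
    q = proportions(recent)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))


# Hypothetical data: validation scores spread evenly, production scores skewed high.
validation_scores = [i / 100 for i in range(100)]
production_scores = [0.5 + i / 200 for i in range(100)]
drift = population_stability_index(validation_scores, production_scores)
```

A frequently cited rule of thumb treats a PSI above roughly 0.2 as a shift worth investigating, though any alert threshold should be justified against your own validation data.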

Establishing a clear understanding of these frameworks ensures that technical development aligns with legal reality. Without this foundation, even the most innovative algorithms face insurmountable barriers to clinical adoption.

AI Radiology Regulatory Requirements At A Glance

A high-level view of the regulatory landscape allows for a strategic assessment of necessary investments and timelines. This summary highlights the core pillars that govern the intersection of medical imaging and artificial intelligence.

Comparison Of Key Regulatory Pillars

| Regulatory Area | What It Covers | Why It Matters For AI Radiology Software |
| --- | --- | --- |
| FDA Oversight | Intended use, risk classification, clinical claims, and safety evidence | Determines whether software can be marketed for clinical detection, diagnosis, or triage |
| HIPAA | PHI protection, access controls, audit logs, and secure processing | Protects patient info in DICOM files, metadata, reports, and clinical records |
| EU MDR | Medical device classification, CE marking, and technical documentation | Mandatory for any AI radiology software used for medical purposes in the EU |
| EU AI Act | High-risk AI controls, transparency, and human oversight | Adds AI-specific obligations for systems used in clinical environments |
| GDPR | Data processing, consent, minimization, and privacy rights | Governs how imaging data is collected, stored, and reused across Europe |
| Cybersecurity | Secure architecture, encryption, and vulnerability management | Protects connected systems from breaches, ransomware, and unauthorized access |
| Clinical Validation | Model performance, sensitivity, specificity, and reader studies | Proves the AI works safely across scanners, modalities, and patient groups |
| Bias Controls | Dataset diversity, subgroup performance, and error analysis | Reduces performance gaps across populations, sites, and scanner types |
| Update Governance | Version control, revalidation, and change logs (PCCP) | Controls how models change after deployment without creating untracked risk |
| Post-Market Monitoring | Drift tracking, real-world performance, and user feedback | Ensures the software remains safe and reliable throughout its lifecycle |

Consolidating these requirements into a unified compliance framework prevents fragmented development cycles. Success depends on treating these standards not as separate tasks, but as a cohesive foundation for engineering and clinical operations.

When AI Radiology Software Falls Under Medical Device Rules

AI radiology software may fall under medical device rules when it detects findings, supports diagnosis, prioritizes scans, measures abnormalities, or influences clinical decisions.

Defining the scope of a software product early determines the complexity of the regulatory journey. The specific functionality dictates whether a tool is a simple workflow aid or a regulated medical device.

1. Intended Use Decides The Regulatory Path

The intended use statement is the most critical document for any healthcare venture. It defines exactly what the software does and who it is for. If the software moves beyond administrative tasks and begins to interpret data, it enters the regulated space.

- Clinical vs. Administrative: Tools that automate billing or scheduling are generally not medical devices.

- Interpretation: Software that identifies a potential fracture or a lung nodule is strictly regulated.

- Automation: Automating the delivery of a report is lower risk than generating the diagnostic content within that report.

2. Clinical Decision Support Raises Regulatory Risk

When software provides Clinical Decision Support (CDS), it directly influences how a radiologist reads a study and, in turn, how the patient is treated. This influence increases the regulatory burden because an error could lead to a missed diagnosis or unnecessary surgery.

- Detection and Triage: Flagging urgent cases like intracranial hemorrhages for immediate review.

- Segmentation: Outlining the boundaries of a tumor to calculate its volume.

- Risk Scoring: Providing a probability of malignancy based on imaging features.

3. FDA Pathways For AI Radiology Software

In the United States, the FDA provides three main pathways for market entry. The choice depends on whether a similar product already exists on the market.

- 510(k) Clearance: For products that are “substantially equivalent” to a previously cleared device.

- De Novo: For novel, low-to-moderate risk devices that have no existing predicate.

- PMA (Premarket Approval): Reserved for high-risk devices that are critical to life or health.

4. EU MDR Classification For Medical AI Software

In Europe, the Medical Device Regulation (MDR) requires a CE mark for all medical software. This involves a rigorous technical file review and a clinical evaluation to prove the device meets safety standards.

- Technical Documentation: Detailed records of the software architecture and risk management.

- Clinical Evaluation: A formal report demonstrating that the software performs as claimed in a clinical setting.

Aligning development with these pathways ensures that the platform is built for clearance from the first line of code. Navigating these rules successfully transforms a technical prototype into a marketable clinical asset.

How Regulations Shape AI Radiology Software Architecture

Regulations shape AI radiology architecture by influencing data pipelines, PACS/RIS integration, human review, audit logs, model versioning, cybersecurity, and monitoring systems. Modern systems must prioritize traceability and data integrity to meet global compliance standards.

Engineering a healthcare platform requires more than just high-performance algorithms; it requires an architecture that treats compliance as a structural component. For an enterprise to scale, every technical layer must provide evidence of safety and data protection.

1. Regulatory Planning Must Start Before Development

Compliance cannot be “bolted on” after the code is written. Decisions made during the initial design phase determine the regulatory burden and the ultimate marketability of the platform.

- Intended Use Alignment: The software architecture must strictly reflect the clinical claims the venture plans to make.

- Validation Scope: Engineering teams must build testing environments that mirror diverse, real-world clinical data.

- Risk Controls: Safety mechanisms, such as error-handling and fail-safes, must be baked into the core logic.

- Documentation Requirements: Every design choice and code change requires a traceable record for future audits.

2. Secure DICOM Pipelines Need Built-In Controls

Radiology software handles DICOM files, which are complex and contain highly sensitive patient identifiers. The ingestion and routing pipelines must be hardened to ensure privacy without compromising diagnostic quality.

- Metadata Handling: Systems must strip or encrypt patient names and IDs before the data reaches the AI training or inference layer.

- De-identification: Automated tools must remove burned-in text on images that could expose patient identity.

- Secure Routing: Data moving between the hospital firewall and the cloud must use encrypted tunnels to prevent interception.
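The metadata handling step above can be sketched in a few lines. This example models a DICOM header as a plain Python dictionary keyed by standard attribute keywords, which is an assumption made to keep the sketch self-contained; a real pipeline would read headers with a DICOM library and apply a full confidentiality profile. The keyed-hash pseudonym for `PatientID` is one possible design choice, swapped in here for illustration, and the function and constant names are hypothetical.

```python
import hashlib
import hmac

# Illustrative subset of identifying attribute keywords (assumption:
# a production profile would cover many more attributes than these).
DIRECT_IDENTIFIERS = {
    "PatientName",
    "PatientBirthDate",
    "PatientAddress",
    "InstitutionName",
    "ReferringPhysicianName",
}


def deidentify_header(header: dict, secret_key: bytes) -> dict:
    """Return a copy of the header with identifiers removed or pseudonymized."""
    clean = {k: v for k, v in header.items() if k not in DIRECT_IDENTIFIERS}
    if "PatientID" in clean:
        # Keyed hash: the same patient always maps to the same pseudonym,
        # and the original ID is not stored in the output.
        message = str(clean["PatientID"]).encode("utf-8")
        digest = hmac.new(secret_key, message, hashlib.sha256).hexdigest()
        clean["PatientID"] = digest[:16]
    return clean


# Hypothetical header and demo key; never hard-code a real key.
header = {"PatientName": "DOE^JANE", "PatientID": "12345", "Modality": "CT"}
clean_header = deidentify_header(header, secret_key=b"demo-key-not-for-production")
```

Keeping a stable pseudonym lets the training layer group studies by patient without ever seeing the real identifier.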

3. PACS And RIS Integration Must Support Traceability

For AI to be useful, it must integrate with existing Hospital Information Systems (HIS). Regulatory bodies look for a clear “audit trail” that shows exactly how the AI results influenced the final radiology report.

- Study Routing: Logic that determines which scans are sent to the AI must be transparent and consistent.

- Model Version Records: The system must log exactly which version of the AI model analyzed a specific study.

- Output Timestamps: Tracking when an AI result was generated versus when a human reviewed it is critical for liability and performance audits.
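As a rough illustration of the traceability items above, an audit record can tie each study to the exact model version and to both timestamps. The class name, field names, and sample values below are assumptions for this sketch, not a schema defined by any standard.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass(frozen=True)
class InferenceAuditRecord:
    study_uid: str                      # which study was analyzed
    model_name: str
    model_version: str                  # exact version that produced the output
    ai_result_at: str                   # ISO 8601 timestamp of the AI output
    reviewed_at: Optional[str] = None   # ISO 8601 timestamp of the human review
    reviewer_id: Optional[str] = None


def review_latency_seconds(record: InferenceAuditRecord) -> Optional[float]:
    """Seconds between AI output and human review, or None if unreviewed."""
    if record.reviewed_at is None:
        return None
    generated = datetime.fromisoformat(record.ai_result_at)
    reviewed = datetime.fromisoformat(record.reviewed_at)
    return (reviewed - generated).total_seconds()


# Hypothetical record for one study.
record = InferenceAuditRecord(
    study_uid="1.2.3.4.5",
    model_name="example-detector",
    model_version="2.1.0",
    ai_result_at="2024-01-01T10:00:00+00:00",
    reviewed_at="2024-01-01T10:05:30+00:00",
    reviewer_id="rad-017",
)
latency = review_latency_seconds(record)
```

Making the record immutable (`frozen=True`) reflects the idea that audit entries are appended, never edited in place.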

4. AI Outputs Must Be Clear For Radiologists

Regulatory standards like the EU AI Act emphasize “explainability.” An AI tool must provide context so the human professional remains the final authority.

- Visual Aids: Bounding boxes and heatmaps show the radiologist exactly where the AI detected a potential issue.

- Confidence Scores: Providing a probability helps clinicians gauge the urgency or certainty of a finding.

- Structured Findings: Outputs should be formatted to flow directly into the radiologist’s report, reducing manual entry errors.
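To show what a structured finding might look like in practice, the sketch below bundles a label, a confidence score, and a bounding box into one validated object that serializes to JSON. All class names, field names, and values are hypothetical; the actual format would depend on the reporting system it feeds.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class BoundingBox:
    x: int
    y: int
    width: int
    height: int


@dataclass(frozen=True)
class StructuredFinding:
    label: str
    confidence: float   # probability in [0, 1]
    box: BoundingBox
    model_version: str

    def __post_init__(self):
        # Reject malformed scores before they reach the radiologist.
        if not 0.0 <= self.confidence <= 1.0:
            raise ValueError("confidence must be between 0 and 1")


def to_report_json(finding: StructuredFinding) -> str:
    """Serialize a finding so it can flow into a downstream report."""
    return json.dumps(asdict(finding), sort_keys=True)


# Hypothetical finding.
finding = StructuredFinding(
    label="suspected lung nodule",
    confidence=0.87,
    box=BoundingBox(x=120, y=340, width=40, height=36),
    model_version="2.1.0",
)
payload = to_report_json(finding)
```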

By aligning architecture with these regulatory demands, enterprises build products that are not just technically sound but legally defensible. This structural integrity is what builds the trust necessary for wide-scale clinical adoption.

How Patient Data Protection Applies To AI Radiology Software

AI radiology software must protect patient data across medical images, DICOM metadata, reports, annotations, access logs, storage systems, and AI training workflows. Compliance requires a combination of technical encryption, strict access controls, and legally mandated de-identification processes.

Data is the lifeblood of AI, but in radiology, that data is highly sensitive. For enterprise leaders, ensuring that imaging pipelines remain compliant with global privacy laws is essential to maintaining institutional trust and avoiding massive legal liabilities.

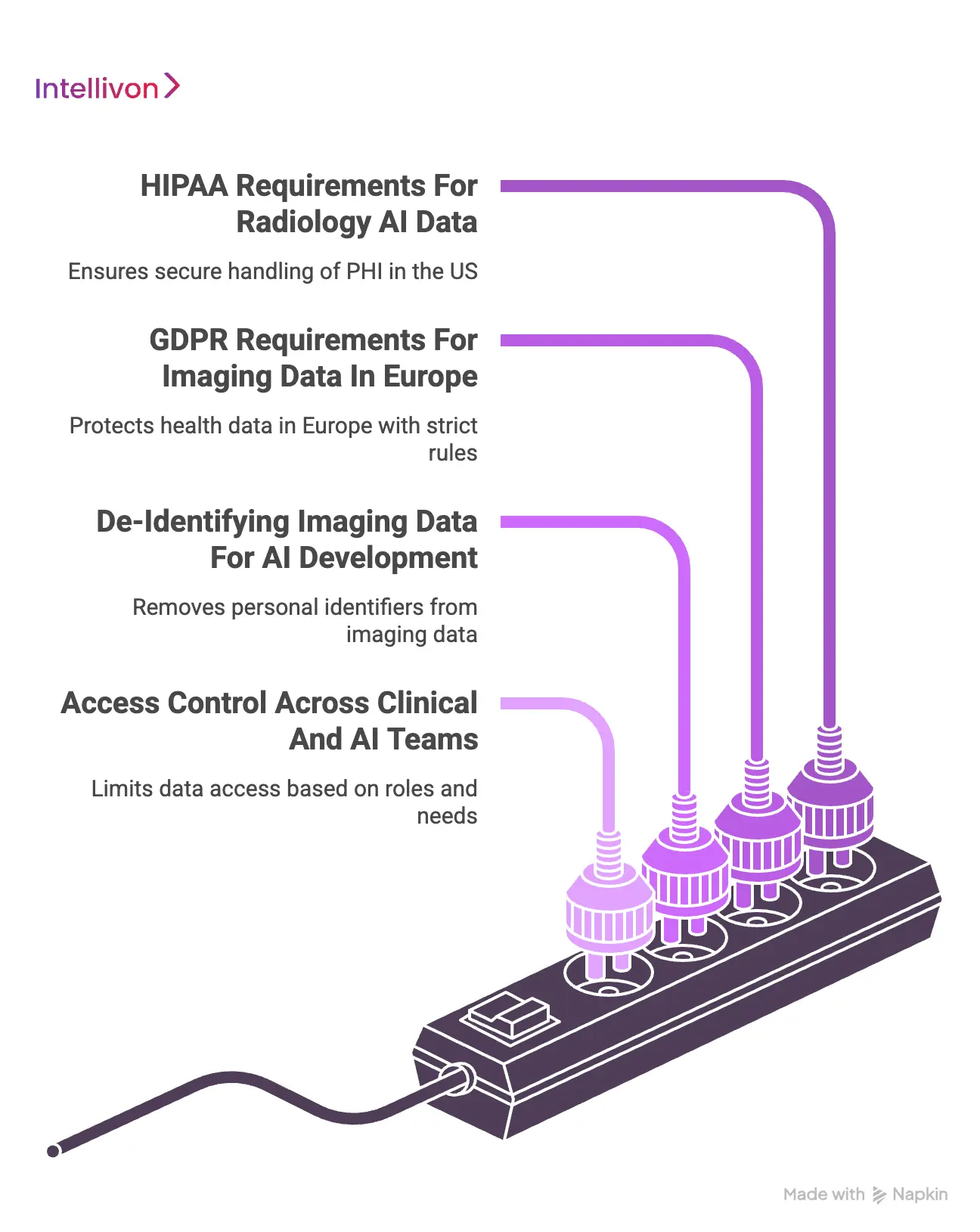

1. HIPAA Requirements For Radiology AI Data

In the United States, HIPAA governs how protected health information (PHI) is handled. For radiology AI, this extends beyond just names and birthdates to include the actual pixel data if it can be linked back to an individual.

- Secure Processing: All data at rest and in transit must be encrypted using industry-standard protocols.

- Business Associate Agreements (BAAs): Enterprises must have legal contracts in place with any cloud provider or vendor handling their data.

- Audit Logs: Every instance of a user or system accessing a patient record must be timestamped and recorded.

- Breach Readiness: A formal plan must exist to notify regulators and patients if data security is ever compromised.

2. GDPR Requirements For Imaging Data In Europe

Europe’s GDPR treats health data as a “special category” requiring the highest level of protection. This regulation impacts how enterprises collect and reuse imaging data for training new AI models.

- Legal Basis: There must be a clear legal reason, such as patient consent or public interest, to process imaging files.

- Data Minimization: Only the specific data points necessary for the AI to function should be collected or stored.

- Right to Erasure: Systems must be designed to identify and delete a specific patient’s data if they withdraw their consent.

- Standard Contractual Clauses: These are required if data is transferred from Europe to servers located in other countries.

3. De-Identifying Imaging Data For AI Development

To use clinical data for research or AI training without violating privacy laws, the data must be de-identified. This is more complex in radiology than in other fields because identifiers can be hidden in multiple layers.

- Metadata Removal: Stripping names, IDs, and hospital locations from the DICOM headers.

- Burned-in Identifiers: Some older scans have patient names written directly into the image pixels; these must be cropped or blurred.

- Report De-identification: Using natural language processing to remove personal details from the text-based radiology reports.

- Re-identification Risk: Ensuring that unique anatomical features (like a facial profile on a head CT) cannot be used to reverse-engineer a patient’s identity.
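For burned-in identifiers, the simplest form of masking is to overwrite a known rectangle of pixels. The sketch below represents an image as a nested list of intensity values, which is a simplification for illustration; real pipelines work on pixel arrays and need a reliable way to locate the text region first. The function name and parameters are assumptions.

```python
def mask_region(pixels, top, left, height, width, fill=0):
    """Return a copy of the image with one rectangle overwritten by `fill`."""
    masked = [row[:] for row in pixels]  # copy so the source image is untouched
    for r in range(top, min(top + height, len(masked))):
        for c in range(left, min(left + width, len(masked[r]))):
            masked[r][c] = fill
    return masked


# Hypothetical 3x4 image with a name banner in the top-left corner.
image = [[9, 9, 9, 9] for _ in range(3)]
cleaned = mask_region(image, top=0, left=0, height=1, width=2)
```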

4. Access Control Across Clinical And AI Teams

Protecting data also means controlling who can see it. A robust architecture limits access based on the specific needs of the staff member, whether they are a treating physician or a software engineer.

- Role-Based Access (RBAC): Defining clear permissions so that engineers only see de-identified data while clinicians see full records.

- Principle of Least Privilege: Users are only given the minimum level of access required to complete their specific task.

- Vendor Restrictions: Third-party developers should never have unfettered access to a hospital’s live patient database.

- Activity Logs and Reviews: Regular audits of who accessed what data help identify potential insider threats or security gaps.
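The access rules above can be expressed as a small permission table plus a check that logs every attempt. The role names, action names, and log structure in this sketch are illustrative assumptions; a production system would normally delegate this to its identity provider.

```python
from datetime import datetime, timezone

# Each role gets only the actions it needs (least privilege).
ROLE_PERMISSIONS = {
    "radiologist": {"read_identified_study", "sign_report"},
    "ml_engineer": {"read_deidentified_study"},
    "vendor_support": set(),  # no direct access to patient data
}

access_log = []


def check_access(user_id: str, role: str, action: str) -> bool:
    """Allow the action only if the role grants it, and record the attempt."""
    allowed = action in ROLE_PERMISSIONS.get(role, set())
    access_log.append({
        "user_id": user_id,
        "role": role,
        "action": action,
        "allowed": allowed,
        "at": datetime.now(timezone.utc).isoformat(),
    })
    return allowed


# Hypothetical users.
engineer_ok = check_access("eng-01", "ml_engineer", "read_deidentified_study")
engineer_blocked = check_access("eng-01", "ml_engineer", "read_identified_study")
```

Logging denied attempts as well as granted ones gives reviewers the raw material for the periodic access audits described above.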

Building these protections into the software’s DNA prevents privacy from becoming a bottleneck to innovation. When data protection is automated and rigorous, it allows the focus to remain on improving clinical outcomes rather than managing legal crises.

How Cybersecurity Requirements Affect AI Radiology Deployment

A security breach in a radiology department can halt hospital operations. For enterprise investors, a robust cybersecurity posture is a competitive necessity, as healthcare systems will not integrate tools that introduce unmanaged risks into their critical infrastructure.

1. Connected Imaging Systems Expand Security Risk

Modern radiology AI does not operate in isolation. It relies on a web of connections to receive images and return results, which significantly expands the potential attack surface.

- PACS and RIS Connections: Direct links to imaging archives must be hardened against unauthorized lateral movement.

- APIs and Cloud Inference: Data moving to external servers for processing must be protected by authenticated and encrypted endpoints.

- Third-Party Integrations: Every plugin or external diagnostic library adds a new layer of dependency and potential vulnerability.

- User Portals: Remote access for radiologists creates entry points that require multi-factor authentication and strict session management.

2. Secure Infrastructure Controls Are Required

Building a secure foundation ensures that even if one part of the system is compromised, the rest remains protected. Regulatory bodies now demand evidence of technical safeguards before approving a deployment.

- Network Segmentation: Keeping the AI processing environment separate from the general hospital network prevents the spread of malware.

- Encryption at Rest and in Transit: Every bit of imaging data must be unreadable to anyone without the proper cryptographic keys.

- Secrets Management: Sensitive credentials, such as API keys and database passwords, should never be hard-coded and must be rotated regularly.

- Identity and Access Management (IAM): Granular controls ensure that only authorized services and individuals can trigger an AI analysis.

3. Hospitals May Ask For SBOMs And Vulnerability Controls

Enterprise buyers are increasingly sophisticated. They now require transparency regarding the code and libraries that make up your software to assess their own risk.

- Software Bill of Materials (SBOM): A comprehensive list of all open-source and proprietary components used in the platform.

- Dependency Tracking: Monitoring these components for known vulnerabilities and applying security patches immediately.

- Vulnerability Scanning: Continuous automated testing to find weaknesses in the code before they can be exploited.

- Penetration Testing: Periodic manual assessments where ethical hackers attempt to break into the system to find hidden flaws.
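Dependency tracking against an SBOM can be sketched as a lookup of each listed component in a table of known-vulnerable versions. The component names, versions, and advisory data below are invented for illustration, and the flat list-of-dictionaries layout is a simplification of real SBOM formats.

```python
def find_vulnerable_components(components, advisories):
    """Return 'name==version' for every component listed in an advisory."""
    flagged = []
    for component in components:
        bad_versions = advisories.get(component["name"], set())
        if component["version"] in bad_versions:
            flagged.append(f'{component["name"]}=={component["version"]}')
    return sorted(flagged)


# Hypothetical SBOM entries and advisory feed.
sbom = [
    {"name": "example-imaging-lib", "version": "1.4.0"},
    {"name": "example-http-client", "version": "2.0.1"},
]
known_bad = {"example-imaging-lib": {"1.3.9", "1.4.0"}}
to_patch = find_vulnerable_components(sbom, known_bad)
```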

4. Incident Response Must Fit Clinical Environments

Security events in radiology must be handled without compromising patient care. An incident response plan must be coordinated with the hospital’s IT department to ensure clinical continuity.

- Containment Protocols: Rapidly isolating an affected server while allowing the rest of the radiology department to continue scanning patients.

- Breach Workflows: Clear, documented steps for notifying legal and regulatory bodies if patient data is accessed.

- Recovery Procedures: Ensuring that system backups are secure and can be restored quickly to minimize downtime.

- Coordination: Regular drills between the software vendor and the hospital security team to ensure everyone knows their role during a crisis.

By prioritizing these security pillars, enterprises protect their reputation and their ability to operate in high-stakes clinical environments. A secure architecture is a resilient one, capable of delivering reliable AI insights even under threat.

Why Human Oversight And AI Governance Matter

Human oversight, bias control, and governance help ensure AI radiology software remains clinically safe, explainable, fair, reviewable, and accountable after deployment. Effective governance bridges the gap between algorithmic potential and real-world clinical safety.

Algorithmic excellence is only half of a successful radiology platform. For enterprise leaders, the other half is a governance structure that ensures the technology serves the clinician rather than replacing their judgment. This human-centric approach is a fundamental requirement for regulatory bodies and hospital ethics boards alike.

1. Radiologists Must Stay In The Review Loop

Current regulations treat AI as a supportive tool, not a final decision-maker. This means the software architecture must facilitate a “human-in-the-loop” workflow where a qualified professional validates every insight before it reaches a patient’s record.

- Review Checkpoints: The system should require a formal sign-off from a radiologist for any AI-generated finding.

- Override Options: Clinicians must have the ability to easily dismiss or correct an AI’s output if it is incorrect.

- Clinical Accountability: Documentation must clearly state that the final diagnostic responsibility rests with the human reviewer.

- Manual Escalation: A clear path for a radiologist to flag a suspected model failure for technical review.
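A human-in-the-loop checkpoint can be modeled as a status gate: an AI finding stays pending until a named reviewer accepts or overrides it, and only accepted findings may move on. The sketch below is one possible shape for that gate. The class names, statuses, and the rule that an override needs a comment are assumptions for illustration.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class ReviewStatus(Enum):
    PENDING = "pending"
    ACCEPTED = "accepted"
    OVERRIDDEN = "overridden"


@dataclass
class AIFinding:
    description: str
    status: ReviewStatus = ReviewStatus.PENDING
    reviewer_id: Optional[str] = None
    override_comment: Optional[str] = None


def sign_off(finding: AIFinding, reviewer_id: str, accept: bool,
             comment: Optional[str] = None) -> None:
    """Record the radiologist's decision on an AI finding."""
    if not accept and not comment:
        raise ValueError("An override needs a comment explaining the disagreement")
    finding.status = ReviewStatus.ACCEPTED if accept else ReviewStatus.OVERRIDDEN
    finding.reviewer_id = reviewer_id
    finding.override_comment = None if accept else comment


def can_enter_patient_record(finding: AIFinding) -> bool:
    """Only findings accepted by a named reviewer may reach the record."""
    return finding.status is ReviewStatus.ACCEPTED and finding.reviewer_id is not None


# Hypothetical finding moving through review.
nodule = AIFinding("suspected lung nodule, right upper lobe")
blocked_before_review = can_enter_patient_record(nodule)
sign_off(nodule, reviewer_id="rad-017", accept=True)
released_after_review = can_enter_patient_record(nodule)
```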

2. AI Outputs Need Explainability For Clinical Use

A “black box” model is a significant regulatory and clinical risk. To build trust and satisfy transparency requirements, the software must provide the “why” behind its findings. This allows a professional to verify the AI’s logic rather than blindly following a result.

- Visual Markers: Bounding boxes and heatmaps that highlight the specific pixels or regions of interest.

- Confidence Scores: Numerical representations of how certain the model is about a particular finding.

- Supporting Context: Displaying similar historical cases or reference data that the AI used to reach its conclusion.

- Model Limitations: Clear disclosures within the interface about what the AI can and cannot detect.

3. Bias Testing Reduces Unsafe Performance Gaps

AI models can develop blind spots based on the data they were trained on. Regulatory bodies now require proof that a radiology tool performs consistently across all patient groups, regardless of their background or the hardware used to scan them.

- Demographic Imbalance: Ensuring accuracy does not drop based on a patient’s age, gender, or ethnicity.

- Scanner Bias: Verifying that a model trained on one brand of MRI machine works just as well on a different manufacturer’s equipment.

- Protocol Bias: Testing the AI against various imaging settings and radiation doses used across different hospital sites.

- Subgroup Performance: Monitoring for higher error rates in specific disease variations or patient populations.
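Subgroup testing comes down to computing the same metrics separately for each group and comparing them. The sketch below calculates sensitivity and specificity per subgroup from labeled cases; the tuple layout, the function name, and the sample data are assumptions for illustration.

```python
from collections import defaultdict


def subgroup_metrics(cases):
    """cases: iterable of (subgroup, has_finding, ai_flagged) with boolean labels."""
    counts = defaultdict(lambda: {"tp": 0, "fp": 0, "tn": 0, "fn": 0})
    for group, has_finding, ai_flagged in cases:
        if ai_flagged:
            key = "tp" if has_finding else "fp"
        else:
            key = "fn" if has_finding else "tn"
        counts[group][key] += 1

    def ratio(numerator, denominator):
        # None signals "not enough cases to compute" rather than a fake zero.
        return numerator / denominator if denominator else None

    return {
        group: {
            "sensitivity": ratio(c["tp"], c["tp"] + c["fn"]),
            "specificity": ratio(c["tn"], c["tn"] + c["fp"]),
            "n": sum(c.values()),
        }
        for group, c in counts.items()
    }


# Hypothetical results grouped by scanner vendor.
results = subgroup_metrics([
    ("vendor_a", True, True),
    ("vendor_a", True, True),
    ("vendor_a", False, False),
    ("vendor_b", True, True),
    ("vendor_b", True, False),   # missed finding
    ("vendor_b", False, False),
])
```

In this made-up data, the model catches every finding on one vendor's scans but only half on the other, which is the kind of gap subgroup analysis is meant to surface. Real evaluations need far larger samples and confidence intervals before drawing conclusions.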

4. Governance Controls Keep AI Use Accountable

Accountability requires a digital paper trail. Governance is the process of capturing every interaction between the clinician and the AI to identify trends, improve the model, and manage liability.

- Review Logs: Automated records showing which findings a radiologist accepted and which they rejected.

- Override Comments: Allowing clinicians to type a brief note explaining why they disagreed with the AI.

- Escalation Records: Tracking how many times a model was flagged for poor performance in a specific clinical setting.

- Corrective Actions: Documenting the steps taken to retrain or update the model based on real-world feedback.
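Review logs become useful once they are aggregated. As a minimal sketch, the function below computes the share of AI findings that radiologists rejected, broken down by model version; the log entry fields and the sample entries are hypothetical.

```python
from collections import Counter


def override_rates(review_log):
    """Fraction of AI findings rejected by reviewers, per model version."""
    totals = Counter()
    rejected = Counter()
    for entry in review_log:
        version = entry["model_version"]
        totals[version] += 1
        if entry["decision"] == "rejected":
            rejected[version] += 1
    return {version: rejected[version] / totals[version] for version in totals}


# Hypothetical review log.
log = [
    {"model_version": "2.0.0", "decision": "accepted"},
    {"model_version": "2.0.0", "decision": "rejected"},
    {"model_version": "2.1.0", "decision": "accepted"},
    {"model_version": "2.1.0", "decision": "accepted"},
]
rates = override_rates(log)
```

A rising override rate for one version or one site is a natural trigger for the escalation and corrective-action records described above.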

Implementing these governance controls transforms a standalone algorithm into a reliable clinical system. By prioritizing oversight and fairness, enterprises create a platform that is not only compliant but also indispensable to the radiologists who use it.

What Documentation Hospitals And Regulators Expect

A well-structured technical file is the bridge between a promising prototype and a cleared medical product.

For those investing in this space, documentation serves as the ultimate proof of quality, providing regulators and hospital boards with the transparency needed to authorize clinical use.

1. Intended Use And Product Claims Documentation

The foundation of any regulatory submission is the definition of what the software is designed to do. This document sets the boundaries for your legal liability and dictates which tests the system must pass to be considered successful.

- Intended Users: Explicitly defining whether the tool is for general radiologists, sub-specialists, or emergency room physicians.

- Clinical Claims: Precise statements about what the AI detects, such as identifying a pulmonary embolism or measuring a lung nodule.

- Limitations and Contraindications: Documenting scenarios where the AI should not be used, such as with specific patient ages or poor-quality scans.

- Workflow Role: Explaining if the tool acts as a concurrent reader, a second reader, or a triage system for prioritizing urgent cases.

2. Risk Management And Clinical Safety Documentation

Regulators prioritize safety over innovation. You must demonstrate a systematic approach to identifying potential failures and explain how the software architecture prevents these failures from harming patients.

- Hazard Analysis: Identifying what could go wrong, such as the AI missing an obvious finding or generating a false alarm.

- Failure Modes: Analyzing the technical reasons for potential errors, such as low-quality image inputs or network latency.

- Risk Controls and Mitigation: Detailed records of the safety features built into the code to catch and resolve errors.

- Residual Risk: A formal acknowledgment of the remaining risks after all controls are implemented, proving they are acceptable for clinical benefit.

3. Validation And Performance Documentation

This section provides the raw evidence that the AI works as promised. It requires a deep dive into the data used to train and test the model, ensuring the results are statistically significant and free from major bias.

- Dataset Transparency: Documenting the source, diversity, and volume of the imaging data used during development.

- Reader Studies: Results from trials where radiologists used the AI to show it actually improves their diagnostic accuracy or speed.

- Subgroup Analysis: Performance metrics broken down by patient demographics and scanner manufacturers.

- Clinical Evidence: Summaries of peer-reviewed studies or internal clinical trials that validate the final product.

4. Technical And Security Documentation

Enterprise IT departments focus on how the software will live within their existing network. This documentation proves that the system is stable, secure, and easy to maintain over several years.

- Architecture Diagrams: High-level visual maps showing how data moves from the hospital scanner to the AI engine and back.

- Model Design and Inference: Documentation of the neural network architecture and how the system processes individual images.

- Cybersecurity Controls: Records of encryption methods, multi-factor authentication, and the results of recent penetration tests.

- Release History: A traceable log of every software update, including what was changed and how those changes were validated.

By maintaining rigorous documentation, enterprises eliminate the friction often found during the final stages of a deal. This level of transparency transforms the software from a black box into a trusted clinical asset that meets the highest professional standards.

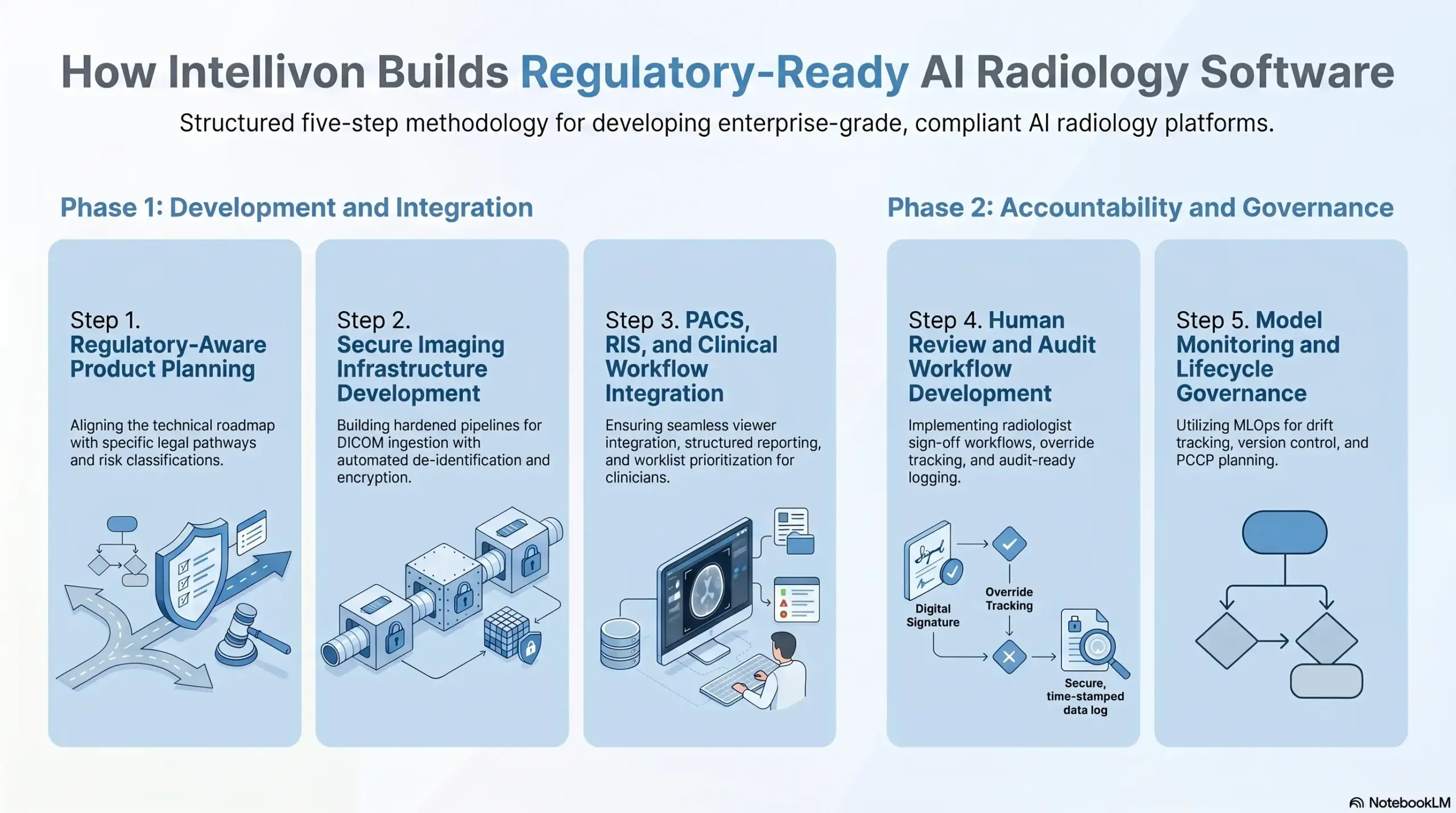

How Intellivon Builds Regulatory-Ready AI Radiology Software

At Intellivon, we transform high-level clinical concepts into enterprise-grade diagnostic platforms. Our methodology treats regulatory requirements not as a final hurdle, but as the foundational architecture for every line of code we write.

We follow a structured, five-step process to ensure every solution is market-ready and clinically defensible.

Step 1. Regulatory-Aware Product Planning

Success begins with aligning the technical roadmap with specific legal pathways. We evaluate the intended use and risk classification before development starts to prevent costly pivots later.

- Intended Use Definition: We help define the clinical boundaries of the software to determine if it requires FDA 510(k) clearance or an EU MDR CE mark.

- Risk and Workflow Mapping: Our team identifies potential clinical hazards and maps the AI role within the existing radiologist workflow.

- Pathway Awareness: We architect the system to meet the specific documentation and validation standards required for your target global markets.

Step 2. Secure Imaging Infrastructure Development

We build hardened pipelines designed to handle sensitive DICOM data with zero compromise on privacy. Our infrastructure ensures that imaging data is protected from ingestion through to long-term storage.

- DICOM Ingestion and Routing: We implement secure protocols for moving high-resolution scans from hospital networks to processing engines.

- Automated De-identification: Our systems automatically strip identifying metadata and remove burned-in patient identifiers to support HIPAA and GDPR compliance.

- Encrypted Data Lifecycle: Every byte of data is encrypted both in transit and at rest, utilizing enterprise-grade secrets management.
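
As a rough illustration of the metadata side of de-identification, the sketch below works on a DICOM header that has already been parsed into a plain Python dictionary. The `PHI_KEYWORDS` set is a small illustrative subset rather than a complete de-identification profile, the `deidentify` function and its `salt` parameter are hypothetical names, and burned-in pixel text is out of scope here.

```python
import hashlib

# Illustrative subset of identifying attributes; a real profile covers many more.
PHI_KEYWORDS = {
    "PatientName",
    "PatientBirthDate",
    "PatientAddress",
    "ReferringPhysicianName",
    "InstitutionName",
}


def deidentify(header: dict, salt: str) -> dict:
    """Return a copy of `header` with identifying fields removed."""
    clean = {k: v for k, v in header.items() if k not in PHI_KEYWORDS}

    # Replace the patient ID with a salted hash so studies from the same
    # patient can still be linked without exposing the original ID.
    if "PatientID" in header:
        digest = hashlib.sha256((salt + header["PatientID"]).encode()).hexdigest()
        clean["PatientID"] = digest[:16]

    # Flag the header as de-identified for downstream systems.
    clean["PatientIdentityRemoved"] = "YES"
    return clean


header = {
    "PatientName": "DOE^JANE",
    "PatientID": "12345",
    "PatientBirthDate": "19700101",
    "Modality": "CT",
}
clean = deidentify(header, salt="site-secret")
```

Hashing the ID is pseudonymization rather than full anonymization, so the salt has to be protected like any other secret and the output may still count as personal data under GDPR.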

Step 3. PACS, RIS, And Clinical Workflow Integration

A diagnostic tool is only valuable if it is used. We ensure our AI solutions integrate effortlessly into the existing hospital ecosystem, providing results exactly where clinicians need them.

- Seamless Viewer Integration: AI outputs, including heatmaps and bounding boxes, appear directly within the radiologist’s primary viewing software.

- Structured Reporting: We generate report-ready findings that flow into the RIS, reducing manual entry errors for the physician.

- Worklist Prioritization: Our integration logic helps triage urgent cases, ensuring critical findings move to the top of the clinical queue.
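
Worklist prioritization can be sketched as a priority queue. The ordering rule below (STAT orders first, then higher AI urgency score, then arrival order) and the `Worklist` class are assumptions made for this example, not a description of any particular PACS or RIS.

```python
import heapq
from itertools import count


class Worklist:
    def __init__(self):
        self._heap = []
        self._arrival = count()  # tie-breaker that preserves arrival order

    def add(self, study_id: str, ai_score: float, stat: bool = False) -> None:
        # Tuples sort ascending: STAT orders (False sorts before True),
        # then higher AI score (negated), then earlier arrival.
        entry = (not stat, -ai_score, next(self._arrival), study_id)
        heapq.heappush(self._heap, entry)

    def next_study(self) -> str:
        return heapq.heappop(self._heap)[-1]

    def __len__(self) -> int:
        return len(self._heap)


worklist = Worklist()
worklist.add("A", ai_score=0.2)
worklist.add("B", ai_score=0.9)
worklist.add("C", ai_score=0.1, stat=True)
worklist.add("D", ai_score=0.9)

reading_order = [worklist.next_study() for _ in range(len(worklist))]
```

Keeping the clinician's STAT flag above the AI score means the model can reorder routine work but never pushes an explicitly urgent order down the queue.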

Step 4. Human Review And Audit Workflow Development

Compliance requires that a human remain the final authority. We develop the interfaces and logging systems necessary to prove that every AI insight was reviewed and validated by a professional.

- Radiologist Sign-off Workflows: We build mandatory review checkpoints into the interface to ensure clinical accountability.

- Override Tracking: Our systems log whenever a clinician disagrees with the AI, capturing valuable feedback for model improvement.

- Audit-Ready Logging: Detailed user logs and case histories provide a transparent paper trail for regulatory audits and hospital due diligence.
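
One way to make a review log tamper-evident is to chain each entry to the previous one with a hash. The sketch below is a simplified, in-memory illustration; the `AuditLog` class, its field names, and the `accept`/`override` decision values are assumptions, and a production system would also need durable storage and access controls.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


class AuditLog:
    def __init__(self):
        self.entries = []

    def record(self, user, study_id, ai_finding, decision, timestamp):
        """Append a sign-off entry linked to the previous entry's hash."""
        body = {
            "user": user,
            "study_id": study_id,
            "ai_finding": ai_finding,
            "decision": decision,  # "accept" or "override"
            "timestamp": timestamp,
            "prev": self.entries[-1]["hash"] if self.entries else GENESIS,
        }
        body["hash"] = _digest(body)
        self.entries.append(body)

    def verify(self) -> bool:
        """Recompute every hash; any edited or removed entry breaks the chain."""
        prev = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev"] != prev or _digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True

    def overrides(self):
        return [e for e in self.entries if e["decision"] == "override"]


log = AuditLog()
log.record("dr_lee", "study-001", "nodule", "accept", "2024-01-01T09:00:00Z")
log.record("dr_lee", "study-002", "no finding", "override", "2024-01-01T09:05:00Z")
```

Here `log.overrides()` returns the cases where the radiologist disagreed with the AI, which is the subset most useful for model feedback.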

Step 5. Model Monitoring And Lifecycle Governance

Regulation continues long after the product is live. We implement the MLOps infrastructure needed to track performance, manage updates, and ensure the AI remains safe over its entire lifecycle.

- Drift and Performance Tracking: Automated dashboards monitor for “model drift” to ensure diagnostic accuracy stays consistent across different scanner types.

- Version Control and Rollbacks: We maintain strict governance over model updates, allowing for seamless transitions and rapid rollbacks if performance issues occur.

- PCCP Planning: Our team helps implement Predetermined Change Control Plans, which let pre-specified model changes ship under a plan regulators have already reviewed, rather than requiring a new submission for each update.
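
Drift tracking usually starts with comparing the distribution of recent model outputs against a validation-time baseline. The sketch below uses the population stability index (PSI), which is one common statistic and not necessarily the one any given team uses. It assumes model scores fall between 0 and 1; the `psi` function name and the bin count are choices made for this example.

```python
import math


def psi(baseline, current, bins=10, eps=1e-6):
    """Population stability index between two lists of scores in [0, 1]."""
    if not baseline or not current:
        raise ValueError("both score lists must be non-empty")

    def proportions(scores):
        counts = [0] * bins
        for s in scores:
            counts[min(int(s * bins), bins - 1)] += 1
        # Floor empty bins at eps so the logarithm stays defined.
        return [max(c / len(scores), eps) for c in counts]

    b, c = proportions(baseline), proportions(current)
    return sum((ci - bi) * math.log(ci / bi) for bi, ci in zip(b, c))


# Invented data: evenly spread baseline scores vs. scores shifted upward.
baseline_scores = [i / 100 for i in range(100)]
shifted_scores = [0.5 + i / 200 for i in range(100)]

no_drift = psi(baseline_scores, baseline_scores)
drift = psi(baseline_scores, shifted_scores)
```

A commonly quoted rule of thumb treats a PSI above roughly 0.25 as a significant shift, but that threshold is a convention rather than a regulatory limit. Running the same check separately per scanner type helps surface drift that an aggregate number would hide.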

By embedding these steps into our core development cycle, Intellivon delivers a complete, regulated infrastructure. This strategic approach ensures that your investment is protected, your timelines are predictable, and your platform is ready for the demands of modern enterprise healthcare.

If you are ready to build a diagnostic platform that meets the highest standards of safety and security, contact Intellivon today to start your strategic consultation.

How Much Does Regulatory-Ready AI Radiology Software Cost?

The cost to build regulatory-ready AI radiology software depends on how deeply the system supports clinical imaging workflows.

A simple AI prototype costs less, but a hospital-ready platform needs secure DICOM pipelines, PACS/RIS integration, radiologist review workflows, audit logs, access controls, validation support, cybersecurity, and post-launch monitoring.

AI Radiology Software Cost Breakdown

| Software Scope | Estimated Cost | Best For |
| --- | --- | --- |
| Basic AI Radiology MVP | $50,000 – $90,000 | Startups validating one focused AI imaging use case |
| Mid-Level AI Radiology Platform | $90,000 – $180,000 | Healthcare companies preparing for pilot deployment |
| Enterprise AI Radiology System | $180,000 – $280,000 | Imaging enterprises building scalable, integration-ready platforms |

What Factors Increase AI Radiology Software Cost?

Several factors can increase the cost of regulatory-ready AI radiology software. The biggest drivers are usually clinical workflow complexity, model scope, integration depth, validation requirements, and security expectations.

Key cost factors include:

- Number of imaging modalities

- AI model complexity

- DICOM data handling needs

- PACS/RIS/EHR integration

- Radiologist review workflow depth

- Clinical validation support

- HIPAA and data security controls

- Audit trail requirements

- Model monitoring needs

- Cloud, on-prem, or hybrid deployment

- Vendor and hospital IT requirements

- Documentation and governance needs

As a result, two AI radiology platforms with similar model features can still have very different costs. A tool used only for internal review will cost less than a platform designed for hospital integration, radiologist workflows, and long-term monitoring.

At Intellivon, we build AI radiology software with these controls planned into the architecture early. This helps enterprises reduce rework, prepare for hospital review, and build platforms that can move from prototype to real clinical deployment with fewer risks.

Conclusion

Navigating the regulatory landscape effectively is a deciding factor in the success of any AI radiology platform. Aligning development with global standards supports clinical safety, builds enterprise trust, and shortens the path to market entry.

By prioritizing these requirements early, stakeholders transform complex legal obligations into a strategic competitive advantage. Building with this compliance-first mindset ensures that innovative diagnostic tools deliver consistent, reliable value within the high-stakes environment of modern healthcare.

Build AI Radiology Software With Intellivon

At Intellivon, we develop AI radiology software for hospitals, diagnostic imaging chains, teleradiology providers, radiology SaaS companies, healthcare enterprises, and medical imaging startups.

Our engineering approach focuses on building software that fits real radiology workflows, protects sensitive imaging data, and supports enterprise deployment from the first architecture decision.

A. Building Secure DICOM And Imaging Data Pipelines

We build secure imaging pipelines that move scans from modalities, PACS, or storage systems into AI workflows safely.

This includes DICOM ingestion, metadata handling, de-identification support, encrypted transfer, image preprocessing, secure storage, and controlled data routing across the platform.

B. Integrating AI With PACS, RIS, And Clinical Workflows

We connect AI radiology software with the systems radiologists already use, including PACS, RIS, EHR, reporting tools, viewers, and hospital IT environments.

This allows AI outputs to appear inside practical clinical workflows through structured findings, prioritization flags, review queues, result routing, and reporting support.

C. Securing AI Inference, Access, And Patient Data

We design privacy and security controls around PHI, imaging metadata, AI outputs, reports, logs, annotations, and system integrations.

This includes role-based access, least-privilege permissions, authentication controls, encrypted storage, secure APIs, monitoring, vendor controls, and incident response readiness.

D. Scaling AI Radiology Platforms For Enterprise Deployment

We design AI radiology software for real healthcare environments, not isolated demos.

Whether the platform needs cloud, on-prem, or hybrid deployment, Intellivon builds the infrastructure needed for secure scaling, hospital review, multi-site workflows, and long-term product growth.

If you are planning to build AI radiology software for clinical imaging workflows, contact Intellivon to design a secure, scalable, and regulatory-aware platform from the first architecture decision.

FAQs

Q1. Does AI radiology software need FDA clearance?

A1. AI radiology software may need FDA clearance if it detects findings, prioritizes scans, measures abnormalities, supports diagnosis, or influences clinical decisions. The requirement depends on intended use, clinical claims, risk level, and whether the software works as decision support or only handles non-clinical workflow automation.

Q2. Can AI radiology software replace radiologists?

A2. AI radiology software is usually designed to assist radiologists, not replace them. Most enterprise systems support triage, detection, segmentation, reporting, or workflow prioritization. Radiologists still review findings, apply clinical judgment, approve reports, and remain central to patient care and accountability.

Q3. What makes AI radiology software hard to deploy in hospitals?

A3. Hospital deployment is difficult because AI radiology software must fit PACS/RIS workflows, protect imaging data, support radiologist review, provide clear outputs, pass security checks, and maintain audit trails. Even strong AI models can fail adoption if integration, usability, compliance, and monitoring are weak.

Q4. How should AI radiology software protect patient imaging data?

A4. AI radiology software should protect patient data through secure DICOM handling, de-identification, encryption, access control, audit logs, vendor controls, and monitored data transfers. PHI can appear in image metadata, reports, annotations, model outputs, logs, exports, and support workflows, so every data flow needs review.